Why Intel® Xeon® Processors Pivoted to AI

Artificial intelligence is everywhere. Even a company like McDonald’s—not typically associated with cutting-edge tech—is investing in AI, because it wants to offer each customer a personalized menu.

That’s why the 2nd Generation Intel® Xeon® Scalable Processor (formerly codenamed Cascade Lake) has a heavy focus on AI and machine learning. Specifically, the CPUs boast the brand-new Intel® Deep Learning Boost (Intel® DL Boost), which can accelerate performance of these workloads up to 4X compared to previous-generation processors.

Under the Hood

At the heart of Intel DL Boost is Intel® AVX-512 Vector Neural Net Instructions (Intel® AVX-512 VNNI). These four new instructions accelerate the inner loops of convolutional neural networks. How? By fusing the multiply, add, and accumulate steps of a low-precision integer dot product, which previously took three instructions, into a single operation (Video 1).
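To make the "three into one" idea concrete, here is a minimal pure-Python sketch of what happens in a single 32-bit lane. It assumes the commonly described mapping, in which the legacy sequence is VPMADDUBSW, VPMADDWD, and VPADDD and the fused VNNI instruction is VPDPBUSD. The function names are illustrative only, and 32-bit overflow of the accumulator is not modeled.

```python
def saturate_int16(x):
    """Clamp a value to the signed 16-bit range, as VPMADDUBSW does."""
    return max(-32768, min(32767, x))


def three_step_lane(acc, a_u8, b_s8):
    """Legacy path for one 32-bit lane: four u8 x s8 products, three steps."""
    # Step 1 (VPMADDUBSW): multiply unsigned by signed bytes, add adjacent
    # pairs, and saturate each pair sum to int16.
    pair_sums = [
        saturate_int16(a_u8[i] * b_s8[i] + a_u8[i + 1] * b_s8[i + 1])
        for i in (0, 2)
    ]
    # Step 2 (VPMADDWD against a vector of ones): widen and add the two
    # int16 pair sums into one int32 value.
    lane_sum = pair_sums[0] * 1 + pair_sums[1] * 1
    # Step 3 (VPADDD): add the result to the running int32 accumulator.
    return acc + lane_sum


def fused_lane(acc, a_u8, b_s8):
    """VNNI-style path (VPDPBUSD): same dot product, one operation,
    with no intermediate 16-bit saturation."""
    return acc + sum(a * b for a, b in zip(a_u8, b_s8))


# Typical values: both paths agree.
print(three_step_lane(5, [10, 20, 30, 40], [1, -2, 3, -4]))  # -95
print(fused_lane(5, [10, 20, 30, 40], [1, -2, 3, -4]))       # -95

# Extreme values: the legacy path clips at int16, the fused path does not.
print(three_step_lane(0, [255, 255, 0, 0], [127, 127, 0, 0]))  # 32767
print(fused_lane(0, [255, 255, 0, 0], [127, 127, 0, 0]))       # 64770
```

A 512-bit register holds 16 such lanes, so one fused instruction covers 64 byte-wide multiplies. The last two lines also show a side benefit of fusing: the intermediate 16-bit saturation step disappears.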

Other new features include support for Intel® Optane™ DC Persistent Memory—which can dramatically increase the total amount of addressable RAM per system—as well as new security enhancements against side-channel exploits. Plus, the new chips are drop-in compatible with the previous generation, making it easier to deploy all the new capabilities.

Computer Vision, Medical Imaging

Features like DL Boost offer significant upside for computer vision. 2nd Generation Intel Xeon Scalable processors can perform real-time inference at the edge or handle deeper analysis in a server back end. Object identification and classification, facial recognition, traffic monitoring, and data analytics all run more quickly on the new hardware.

The processors have advantages in medical imaging as well. They can accelerate analysis, improve accuracy, and even reduce patient exposure to radiation by reducing the number of image captures.

GPUs are often used in this area, but these solutions face significant memory constraints. Medical images can already exceed 1GB, and they continue to grow. Such large files can exceed on-board VRAM capacities of GPU cards.

With their support for Intel Optane DC Persistent Memory, the new Intel Xeon processors overcome this limitation. Supporting up to 4.5TB (1.5TB of DRAM + 3TB of Optane), systems based on this new architecture have no problem handling large images.
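As a rough illustration of the capacity gap, the sketch below counts how many 1GB images fit in each memory pool. The 4.5TB figure comes from the text above; the 16GB VRAM value is a hypothetical stand-in for a GPU card, not a number from this article. Binary units (1TB = 1024GB) are also an assumption, as is ignoring memory used by the OS and applications.

```python
GB_PER_TB = 1024  # assumption: binary units


def images_that_fit(capacity_gb, image_gb):
    """Return how many whole images of image_gb fit in capacity_gb."""
    return int(capacity_gb // image_gb)


# From the article: 1.5TB of DRAM plus 3TB of Optane persistent memory.
system_capacity_gb = (1.5 + 3.0) * GB_PER_TB

# Hypothetical GPU card, used only for comparison.
HYPOTHETICAL_VRAM_GB = 16

print(images_that_fit(system_capacity_gb, 1))    # 4608
print(images_that_fit(HYPOTHETICAL_VRAM_GB, 1))  # 16
```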

These are of course just a few use cases for AI. Other applications can be found in smart factories, oil and gas fields, energy grids, and more.

Get a Quick Start

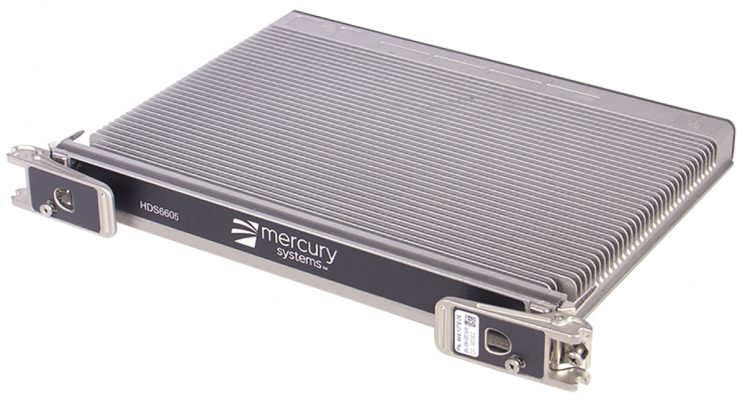

Developers interested in deploying these capabilities quickly can choose from a number of off-the-shelf systems. For example, Mercury Systems offers the new CPU in its 6U OpenVPX EnsembleSeries HDS6605 (Figure 1). This server uses a 22-core Intel Xeon Gold 6238T Processor, with a 1.9GHz base clock, 3.7GHz Turbo Boost, and a 125W TDP.

The HDS6605 stands out for the security and durability features Mercury designs into the product. For example, Mercury converts the CPUs from an LGA3647 socketed interface to a soldered BGA interface. Moving to BGA allows Mercury to offer higher performance in smaller, highly ruggedized form factors.

It’s not unusual for companies to offer cutting-edge features on data-center platforms. But the HDS6605 brings these capabilities to nearly any environment by ruggedizing every aspect of the server design. It will be interesting to see how developers leverage this go-anywhere AI and machine learning performance.

Target workloads for the HDS6605 include image recognition, radar processing, and sensor fusion. Sensor fusion refers to combining data from multiple sensors—such as visual spectrum cameras, infrared cameras, radar, and other sensor packages.

An AI Game Changer

The adoption of AI and machine learning is already growing fast. Mercury expects the AI processing capabilities of the new Intel Xeon, combined with the platform’s long lifecycle, to supercharge this trend.

Cascade Lake’s performance improvements in AI inference workloads can make the difference between two outcomes: processing data in real time and making decisions as events happen, or performing the same analysis off-site, after the fact.

The AI and deep learning features supported by 2nd Generation Xeon Scalable Processors allow forward-thinking adopters to take advantage of these capabilities as they come online in the future. From improved facial and speech recognition to using AI to diagnose disease, the potential scale and scope of improvements are enormous.

Developers wanting to explore AI should investigate the Intel® Distribution of the OpenVINO™ toolkit. It supports deep learning models trained in BigDL, Caffe, MXNet, and TensorFlow, among others. Once models are built and trained, developers can deploy them on 2nd Generation Xeon Scalable CPUs to take advantage of DL Boost inferencing gains.