Machine Vision Needs to Be Easier

Before manufacturers can adopt Industry 4.0 principles, they must level up their workers’ skills.

This is no small challenge. A 2018 Deloitte report showed that only 47% of organizations believe they are doing enough to create a workforce for Industry 4.0. The same report showed that organizations want to train existing employees rather than hire new ones, so it’s crucial for manufacturers to find a path to Industry 4.0 that minimizes the need for training.

Machine Vision Requires Special Skills

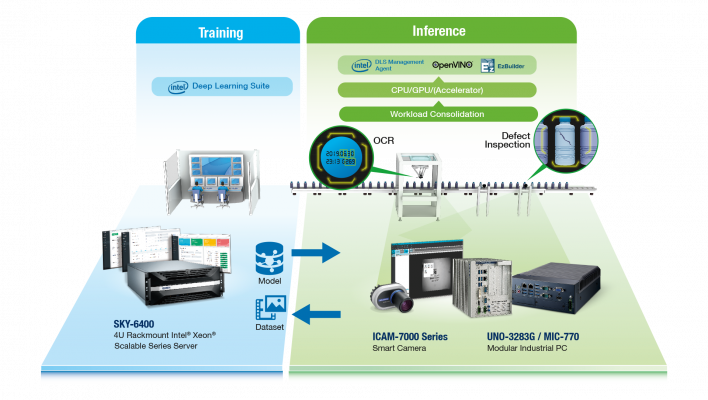

Machine vision is a prime example of the issues at hand. As illustrated in Figure 1, a machine vision system involves an array of sophisticated hardware and software, along with complex techniques to set up and monitor the system. Key elements of such a system include:

- Training: Existing image data is used to construct models of objects and their properties within a high-performance server environment.

- Inference: The trained models are deployed on ruggedized systems within a factory environment, where they can assess new images (for example, recognizing text or detecting defects).

- Retraining: If the factory environment changes, such as through deviations in optics, lighting, or product specifications, the data distribution may change. A retraining process collects new images and then updates the model.
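
To make the retraining step more concrete, here is a minimal sketch of one way a system might decide that the data distribution has shifted. It is an illustration only, not the method used by any product mentioned in this article. The function name `needs_retraining`, the choice of mean image brightness as the monitored statistic, and the threshold of 3.0 are all assumptions.

```python
from statistics import mean, stdev


def needs_retraining(baseline, recent, z_threshold=3.0):
    """Return True when recent images drift far from the training baseline.

    baseline: one number per training image (assumed here: mean brightness).
    recent:   the same statistic measured on recent factory images.
    z_threshold is a hypothetical cutoff, not a recommended value.
    """
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    base_mean = mean(baseline)
    base_std = stdev(baseline)
    if base_std == 0:
        # A constant baseline: any change in the recent mean counts as drift.
        return mean(recent) != base_mean
    # Standard error of the recent-window mean under the baseline spread.
    std_error = base_std / len(recent) ** 0.5
    z_score = abs(mean(recent) - base_mean) / std_error
    return z_score > z_threshold
```

In this sketch, a lighting change that raises average brightness well beyond the spread seen during training would return `True`, which could then prompt the collection of new images for retraining.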

Each step in this process requires specialized knowledge. For example, a developer might know how to train a model but not know about variations in factory conditions. Conversely, operations staff might be experts on manufacturing defects and their causes but lack programming skills.

Simplified Approach to Machine Vision

To address this skills gap, companies like Advantech are creating end-to-end solutions (Figure 1) that help programmers and operations staff meet in the middle. We recently spoke with Neil Chen, a machine vision senior manager, and Alex Liang, a machine vision product manager, about this new approach.

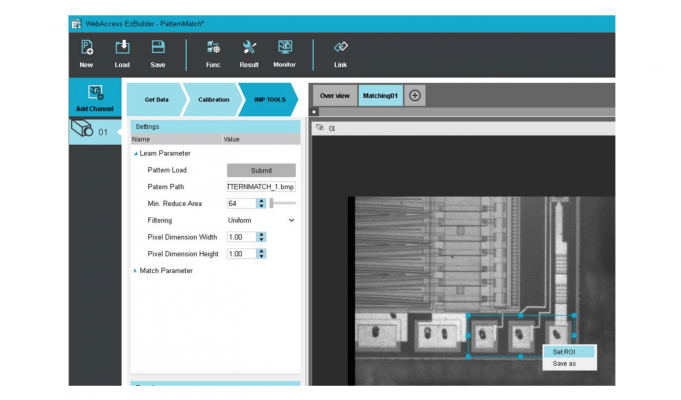

“We understood how simplicity would make the process much easier for our users,” said Chen. “That’s why we designed the EzBuilder training software and its graphical user interface so that workers without any programming skills could rapidly build and deploy machine vision applications—generate models, handle labeling, or input the product—throughout the entire deep learning process.” Figure 2 illustrates how the straightforward tool can be used by non-programmers.

The company also simplified the deep learning process by integrating a smart Intel® FPGA-powered camera and the Intel® Distribution of OpenVINO™ toolkit into the solution.

“A benefit of OpenVINO,” said Liang, “is that one program can be written and then, depending on the workload, run on various pieces of Intel® hardware. For example, the same deep learning model can be run on different Intel CPUs, such as Intel® Xeon®, Intel Atom®, and Intel® Core™ processors, and the Intel® Deep Learning Inference Accelerator using the same upper layers of the software. And with just one command line difference, the program can be set to different targets.”
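
The "one command line difference" idea can be sketched roughly as follows. This is not code from the Advantech solution. It assumes a recent release of the OpenVINO Python package (imported as `openvino`), and the helper names `parse_args` and `compile_for_device` are hypothetical. The device string is passed straight through to the toolkit, so the rest of the program does not change when the target does.

```python
import argparse


def parse_args(argv=None):
    """Read the model path and the target device from the command line."""
    parser = argparse.ArgumentParser(description="Run one model on a chosen device")
    parser.add_argument("--model", required=True, help="path to the model file")
    parser.add_argument("--device", default="CPU",
                        help="OpenVINO device name, for example CPU or GPU")
    return parser.parse_args(argv)


def compile_for_device(model_path, device):
    """Load a model and compile it for the requested device."""
    # Imported lazily so the argument handling works without OpenVINO installed.
    import openvino as ov  # assumption: a recent OpenVINO release is available

    core = ov.Core()
    model = core.read_model(model_path)
    # Only the device string differs between hardware targets.
    return core.compile_model(model, device)
```

A script built on these helpers could be run with `--device CPU` on one machine and `--device GPU` on another; which device names are available depends on the hardware and plugins installed.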

Machine Vision in the Real World

As illustrated in Figure 3, enabling tools and machines to accurately and rapidly handle routine production tasks requires developing a machine vision system that can do the following:

- Reliably identify a wide range of objects within process chains

- Increase the efficiency and reduce the complexity of workflows

- Automate and speed up production

Optical character recognition (OCR) makes it possible to accurately and rapidly recognize and read alphanumeric text on labels, materials, parts, and finished goods. This is necessary to ensure traceability throughout manufacturing and distribution processes.

The system, according to Chen, must overcome OCR challenges related to different fonts, languages, size and color of text, and distortion in the production line. “Deep learning-based OCR,” said Chen, “provides a new approach to solving OCR challenges through image labeling, training, and inferencing, as well as by simplifying processes and increasing reliability on the factory floor. The retraining process makes the OCR system more accurate and adaptive.”

Defect inspection is another task that machine vision can enhance. “Given the unpredictability of features in the quality assurance process, the machine vision system must be sharp enough to accurately inspect for defects,” said Liang. “And if the system doesn’t possess a high level of refinement,” added Chen, “the automatic optical inspection (AOI) system can miss defects, or tag perfectly made parts or products as defective. What we call underkill and overkill.”

The deep learning technology found in the Advantech solution can improve AOI systems, fine-tuning them through training, inference, and retraining to accurately tag defective items for various kinds of flaws while avoiding errors.
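
Underkill and overkill can be tracked as simple rates. The sketch below is one possible way to compute them from paired inspection results. The function name `kill_rates` and the choice of denominators (missed defects over all defective parts, false alarms over all good parts) are assumptions, since the article does not define them numerically.

```python
def kill_rates(actual_defective, flagged_defective):
    """Return (underkill_rate, overkill_rate) for paired inspection results.

    actual_defective:  True where a part really is defective.
    flagged_defective: True where the inspection system tagged the part.
    """
    if len(actual_defective) != len(flagged_defective):
        raise ValueError("both sequences must have the same length")
    pairs = list(zip(actual_defective, flagged_defective))
    # Underkill: a defective part that the system let through.
    missed = sum(1 for actual, flagged in pairs if actual and not flagged)
    # Overkill: a good part that the system tagged as defective.
    false_alarms = sum(1 for actual, flagged in pairs if not actual and flagged)
    defective = sum(1 for actual, _ in pairs if actual)
    good = len(pairs) - defective
    underkill_rate = missed / defective if defective else 0.0
    overkill_rate = false_alarms / good if good else 0.0
    return underkill_rate, overkill_rate
```

Comparing these two rates before and after a retraining cycle is one way to check whether the updated model actually reduced both kinds of error.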

Both Chen and Liang commented on how machine vision can improve workpiece positioning and guidance. “High-speed production lines, verification, robot-guided pick and place, and other tasks require machine vision-enabled positioning tools, locators, and pattern finders,” said Liang. “This is needed,” said Chen, “to recognize and determine the exact position and orientation of parts. This in turn is used to position tools for inspection or other jobs. The data can also be inputted into handling devices.”
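
As a rough illustration of what "position and orientation" can mean in practice, the sketch below estimates both from a binary mask of a part using image moments, a classical technique. It is not the deep learning approach described in this article, and the function name `position_and_orientation` is hypothetical. The mask is assumed to be a list of rows containing 0 for background and 1 for the part.

```python
import math


def position_and_orientation(mask):
    """Return (cx, cy, angle_degrees) for the foreground pixels of a binary mask.

    The angle is that of the principal axis, measured from the x axis in
    image coordinates (y grows downward).
    """
    points = [(x, y)
              for y, row in enumerate(mask)
              for x, value in enumerate(row) if value]
    if not points:
        raise ValueError("mask has no foreground pixels")
    count = len(points)
    # Centroid gives the position of the part.
    cx = sum(x for x, _ in points) / count
    cy = sum(y for _, y in points) / count
    # Second-order central moments describe how the pixels spread out.
    mu20 = sum((x - cx) ** 2 for x, _ in points) / count
    mu02 = sum((y - cy) ** 2 for _, y in points) / count
    mu11 = sum((x - cx) * (y - cy) for x, y in points) / count
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return cx, cy, math.degrees(angle)
```

The centroid and angle returned here are the kind of values that could be passed on to a handling device or used to position an inspection tool.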

Consensus Is Essential to Attaining Industry 4.0

Manufacturers are under pressure to transition their existing facilities to Industry 4.0 to achieve gains in productivity and efficiency while reducing cost. To make this happen and create harmony across the enterprise, it is crucial to educate IT, OT, and other stakeholders on the challenges that each will need to overcome.

Those evaluating machine vision technologies are advised to seek solutions that can be deployed without widespread retraining or hiring. Naturally, businesses also look for solutions that offer speed and reliability, and that generate ROI. And solutions that offer maximum flexibility and capability are a wise choice for manufacturers looking to future-proof their investment.