Why Software Is Playing Catch-Up to Edge Computing Hardware

The embedded computing industry continues to cycle between having the hardware lead versus having the software lead. Members on each side of the engineering wall take a turn, then wait for their counterparts to catch up in terms of feature sets.

Right now, the hardware is ahead. CPUs like 12th Gen Intel® Core™ processors combine Performance and Efficiency cores, providing the infrastructure to optimize different edge and enterprise workloads on the same system. Pair these processors with embedded hardware such as PICMG COM-HPC modules, which accommodate higher-performance, higher thermal design power (TDP) devices, and multiple software workloads can be consolidated onto a single hardware target.

Now it’s time for the software foundation to help scale that advanced hardware. And that advance can really come only in the form of a hypervisor.

Latest Core Processor Enables Workload Consolidation

Workload consolidation unites multiple operations onto fewer platforms, thereby optimizing operations and making the platforms more scalable. This usually means that deterministic applications run on one core while enterprise or AI workloads run on others. That separation helps maximize performance while also keeping the critical assets more secure. It also provides higher reliability, as fewer components mean fewer points of failure, while reducing the total system cost.

But note that original equipment manufacturers (OEMs) and systems integrators must employ software stacks in a way that capitalizes on the benefits of workload consolidation, which means maximizing system partitions. The amount of partitioning is typically based on the needs of the end application, including the use of a hypervisor, which builds on a microprocessor’s multi-core architecture. In particular, the Real-Time Hypervisor from Real-Time Systems (RTS), tuned for Intel Atom®, Intel® Core™, and Intel® Xeon® processors, enables workload consolidation in edge environments on boards like congatec COM-HPC modules.

“The RTS Hypervisor can be easily configured to exactly meet your system requirements,” according to Christian Eder, Director of Product Marketing for congatec. “With the configuration file, which is basically an easy-to-generate text file, you can precisely assign computer resources and operating systems (OS) to different CPU cores and specify your preferences for the runtime environment. It is used as an input for the boot loader to partition the system into multiple systems.”
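To make the idea of assigning operating systems to CPU cores more concrete, here is a minimal Python sketch of a partition plan and a consistency check. It is not the RTS Hypervisor configuration format, which this article does not show; the `Partition` class, the `validate_plan` function, and the example partition names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    """One guest OS and the CPU cores reserved for it (hypothetical model)."""
    name: str
    os: str
    cores: frozenset
    realtime: bool = False


def validate_plan(partitions, total_cores):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    owner = {}  # core index -> name of the partition that claimed it first
    for part in partitions:
        if not part.cores:
            problems.append(f"{part.name}: no cores assigned")
        for core in sorted(part.cores):
            if not 0 <= core < total_cores:
                problems.append(f"{part.name}: core {core} does not exist")
            elif core in owner:
                problems.append(
                    f"{part.name}: core {core} already assigned to {owner[core]}"
                )
            else:
                owner[core] = part.name
    return problems


# Hypothetical plan: an RTOS on two dedicated cores, Linux on four others.
plan = [
    Partition("control", "RTOS", frozenset({0, 1}), realtime=True),
    Partition("hmi", "Linux", frozenset({2, 3, 4, 5})),
]
print(validate_plan(plan, total_cores=16))  # prints [] because no core is shared
```

The check enforces the one property that matters most for consolidation as described here: every core belongs to at most one partition, so a real-time guest never competes with another OS for its cores.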


The RTS Hypervisor also supports virtualization technologies like Intel® Virtualization Technology (Intel® VT-x) and, for I/O devices, Intel® Virtualization Technology for Directed I/O (Intel® VT-d). It assures hard, uncompromised real-time performance for a real-time OS running in parallel with other operating systems: the other systems do not interfere with time-sensitive functions, and the hypervisor adds no latency. For custom applications, RTS works directly with its customers to adapt the hypervisor for specific requirements. That could include deterministic solutions with multiple OSs.

Core-Specific Workloads on COM-HPC

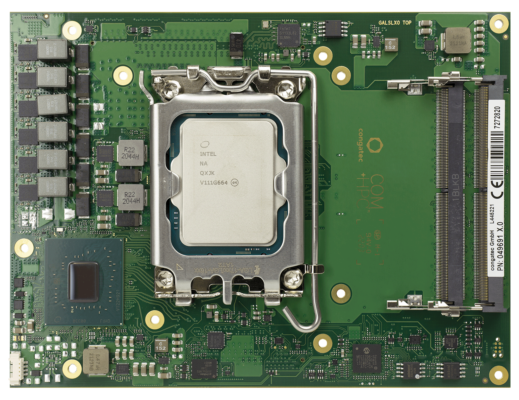

An example of a COM-HPC module that can take advantage of workload consolidation is the conga-HPC/cALS, which is suited for industrial environments (Figure 1). It boasts up to 16 processor cores, a maximum memory footprint of 128 GB, up to 2x 2.5 GbE connectivity, and support for time-sensitive networking (TSN).

The onboard 12th Gen Intel® Core™ processor raises performance over previous generations and uses PCIe Gen 5 on the recently announced COM-HPC platform, which opens the door to faster graphics and advanced AI functionality. AI acceleration is made possible (and simplified) through the Intel® Distribution of OpenVINO™ Toolkit, and it can be realized in a workload-consolidated system because of the strict management and separation provided by Intel processors and a real-time hypervisor like the one from RTS.

Networking is one area that could potentially throttle performance. Ethernet technology can now scale to 100 Gbit/s or more, and COM-HPC can handle that with the Server-type modules. Along these same lines, TSN simplifies a design by slicing up the bandwidth and reducing the number of required cables, which aids the design’s real-time communication, vital in many automation and robotics use cases.

In many applications, real-time communication is executed over a 5G medium. Enhanced with network slicing, a platform can operate wirelessly in Industry 4.0 real-time deployments. Regardless, the networking stack will need to be managed by an operating system, and that operating system cannot be subjected to inter-process disturbances or resource constraints if it is controlling something like a robotic automation system. In these cases, the combination of 12th Gen processors with hardware virtualization support and the RTS Hypervisor ensures that ample system resources are available to ongoing tasks.

“The latest revision of the Real-Time Hypervisor already takes the Performance and Efficiency cores into account. Designers can define the best-suited core type for their real-time application, for example, define a P-Core for performance-hungry real-time applications or assign the efficient E-Core to the RTOS for smaller workloads. The clock speed should also be fixed in order to always guarantee predictable schedules for real-time operation,” Eder says.
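The rule of thumb in the quote above can be sketched in a few lines of Python. This is an illustration only, not how the RTS Hypervisor makes or records the decision: the `CoreAssignment` class, the `assign_core` function, and the workload names are hypothetical, and applying the same P-core/E-core rule to non-real-time workloads is an assumption made here for simplicity.

```python
from dataclasses import dataclass


@dataclass
class CoreAssignment:
    workload: str
    core_type: str     # "P" (Performance) or "E" (Efficiency)
    fixed_clock: bool  # True when the clock speed should be pinned


def assign_core(workload, realtime, performance_hungry):
    """Pick a core type and clock policy for one workload (hypothetical rule)."""
    core_type = "P" if performance_hungry else "E"
    # Real-time workloads get a fixed clock so their schedules stay predictable;
    # other workloads are left free to scale frequency dynamically.
    return CoreAssignment(workload, core_type, fixed_clock=realtime)


# Hypothetical workloads: (name, is real-time, is performance-hungry)
for args in [
    ("motion-control", True, True),
    ("sensor-polling", True, False),
    ("dashboard", False, False),
]:
    print(assign_core(*args))
```

In this sketch the core type follows the workload's performance needs, while the fixed clock follows only its real-time requirement, which keeps the two decisions independent.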

Catching Up the Software Stack

As you can see, combining Intel’s 12th Gen Core processors with a strategy that incorporates workload consolidation results in systems equipped with true IoT hyperconvergence for mission-critical edge and enterprise applications. This even applies to mission-critical automation systems that reduce the risk of equipment causing harm to people or damage to property due to malfunction or incorrect operation.

“Of course, having one system instead of multiple systems also helps in terms of reliability; just think about MTBF,” Eder says. “The more components you have, the more components can fail. If it’s two individual systems, the MTBF is half of having just one system.”
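The arithmetic behind that MTBF remark can be worked through under a common simplifying assumption: each system has a constant, independent failure rate (the reciprocal of its MTBF), and the application fails if any one system fails. The `series_mtbf` function and the 100,000-hour figure below are hypothetical and chosen only to illustrate the calculation.

```python
def series_mtbf(*mtbf_hours):
    """MTBF of systems that must all work, assuming constant and
    independent failure rates (failure rate = 1 / MTBF)."""
    total_failure_rate = sum(1.0 / m for m in mtbf_hours)
    return 1.0 / total_failure_rate


# Hypothetical figure: each system has an MTBF of 100,000 hours.
one_system = series_mtbf(100_000)
two_systems = series_mtbf(100_000, 100_000)

# Failure rates add, so two identical systems halve the combined MTBF.
print(f"one system:  {one_system:,.0f} h")
print(f"two systems: {two_systems:,.0f} h")
```

With two identical systems the failure rates add, so the combined MTBF is half that of a single system, which matches the quote. Real designs with redundancy or non-constant failure rates would need a different model.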

The 12th Gen Intel Core processor allows for efficient workload handling, meaning that dynamic clocking and core assignment can be employed. Any real-time threads are hosted on a separate virtual machine on a core with a fixed frequency as required by the application, while the less mission-critical non-real-time threads can be handled on an “as needed” basis.

By managing this with technologies like Intel Thread Director and a rigid hypervisor, developers pursuing workload consolidation can manage system power more effectively while still providing full real-time capabilities. By maximizing the capabilities of modern hardware, the software team may have finally caught up.

This article was edited by Christina Cardoza, Associate Editorial Director for insight.tech.