SR-IOV: Unleashing the Power of GPUs in IIoT

The graphics processing unit (GPU) has become a vital resource for the Industrial Internet of Things (IIoT). According to Waterball Liu, a Solution Product Manager at embedded solutions provider DFI, any application that requires highly intensive computing—such as machine vision processing, data analytics, or machine learning applications—can benefit from GPUs.

But the need for performance is particularly intense for multifunction platforms that combine demanding workloads like those mentioned above in a single system. Add graphical displays to the mix—a common feature in these consolidated systems—and GPUs become even more critical.

The challenge then becomes how to share the GPU.

Modern IIoT systems typically combine workloads using virtualization or containers, but both techniques create a performance-limiting bottleneck at the GPU level. It all comes down to complexity. Over time, virtualization technology has gradually expanded to include memory, I/O devices, networking, and storage—but not all hardware components can be easily virtualized.

Graphics technology is a prime example. Modern 3D rendering pipelines are highly complex, GPUs from different manufacturers share no unified instruction set, and 3D application programming interfaces are highly programmable. Together, these factors make GPU drivers akin to high-level language compilers and raise the technical bar for GPU virtualization.

For Industrial IoT platform developers whose primary objective is to get more out of a single system at the lowest cost and resource utilization, these hurdles often make building workload-consolidated systems around GPU technology more trouble than it’s worth.

The Role of SR-IOV in GPU Virtualization

Fortunately, a proven technology already addresses this problem.

The PCIe standard Single-Root I/O Virtualization (SR-IOV) defines a method for sharing a physical device by partitioning it into virtual functions. SR-IOV is already widely used to virtualize network adapters, so it provides a programming model that is well understood and thoroughly field tested.

Applied to a GPU, the technology gives each virtual machine (VM) or container access to a graphics function with near-native performance.
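To make the idea of virtual functions more concrete, here is a minimal sketch of how a Linux host could report a device's SR-IOV capacity. It assumes a Linux system, where the kernel exposes the `sriov_totalvfs` and `sriov_numvfs` attributes in sysfs for SR-IOV-capable PCI devices. The helper name `read_sriov_capacity` and the PCI address in the usage section are illustrative assumptions, not part of any DFI or Intel tooling described here.

```python
from pathlib import Path


def read_sriov_capacity(device_dir):
    """Return (total_vfs, enabled_vfs) for one PCI device directory.

    Returns None when the directory lacks the SR-IOV sysfs attributes,
    which is the case for devices (or kernels) without SR-IOV support.
    """
    device_dir = Path(device_dir)
    total_path = device_dir / "sriov_totalvfs"   # maximum VFs the device supports
    enabled_path = device_dir / "sriov_numvfs"   # VFs currently enabled
    if not total_path.is_file() or not enabled_path.is_file():
        return None
    total = int(total_path.read_text().strip())
    enabled = int(enabled_path.read_text().strip())
    return total, enabled


if __name__ == "__main__":
    # Assumption: 0000:00:02.0 is a typical address for integrated graphics;
    # substitute the address reported by lspci on the actual system.
    capacity = read_sriov_capacity("/sys/bus/pci/devices/0000:00:02.0")
    if capacity is None:
        print("SR-IOV attributes not found for this device")
    else:
        total, enabled = capacity
        print(f"{enabled} of {total} virtual functions enabled")
```

Taking the device directory as a parameter keeps the function usable against any PCI device and easy to exercise against a temporary directory.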

“SR-IOV reduces the overhead for virtualized environments,” explains Liu. “It makes nearly 100% of the GPU power available for virtualized applications.”

SR-IOV In Action: A Performance Game Changer

In the past, the high-performance GPUs needed for industrial workload consolidation were available only as expensive discrete chips, which added unwanted cost to industrial systems. But today’s mainstream processors, like 12th Gen Intel® Core™ embedded processors, offer SR-IOV in their integrated graphics engines, which deliver considerable performance. “The integrated GPUs in Intel® processors provide a cost-effective and reliable solution for industrial computing, eliminating the need for additional discrete GPUs,” notes Liu.

This simplified approach to GPU acceleration opens up new possibilities for optimizing industrial workloads. Liu points to inspection systems as an example. “These systems require substantial computing power for AI-related tasks, such as defect detection and image recognition. With integrated graphics and SR-IOV, these systems can efficiently execute these applications with minimal system complexity,” he says.

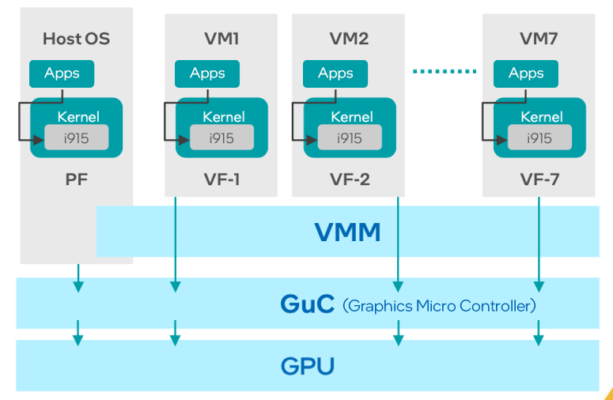

A 12th Gen Core processor outfitted with SR-IOV, for example, can support up to four independent displays and seven virtual functions. Figure 1 illustrates how these capabilities can be accessed by up to seven VMs independently.
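The one-function-per-VM model can be sketched in a few lines. This is an illustrative planning helper only, assuming each VM receives exactly one virtual function and using the limit of seven quoted above. The function name, the VM names, and the 1-based indices are hypothetical labels, not identifiers from a real hypervisor or driver.

```python
MAX_VFS = 7  # limit quoted in the article for a 12th Gen Core processor


def assign_virtual_functions(vm_names, max_vfs=MAX_VFS):
    """Map each VM name to its own virtual function index (1-based).

    Raises ValueError when names repeat or when more VMs are requested
    than there are virtual functions to hand out.
    """
    if len(set(vm_names)) != len(vm_names):
        raise ValueError("VM names must be unique")
    if len(vm_names) > max_vfs:
        raise ValueError(
            f"{len(vm_names)} VMs requested, but only {max_vfs} virtual functions exist"
        )
    return {name: index for index, name in enumerate(vm_names, start=1)}


if __name__ == "__main__":
    # Hypothetical VM names for a consolidated inspection station.
    plan = assign_virtual_functions(["hmi", "vision", "analytics"])
    for vm, vf_index in plan.items():
        print(f"{vm}: virtual function {vf_index}")
```

Rejecting an eighth VM up front mirrors the hardware limit: a request beyond the available virtual functions cannot be satisfied by SR-IOV alone.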

When it comes to real-world applications, the impact of SR-IOV is nothing short of remarkable, Liu explains. As the first company to validate SR-IOV on Intel processors with integrated graphics, DFI demonstrated the performance uptick by running two virtualized Windows 10 operating systems—one with SR-IOV and one without—on its ADS310 microATX board.

In the proof of concept, a video file was streamed from local storage through the two OSs and on to remote displays over Wi-Fi and 100 Mbps HDBaseT Ethernet. The installation without SR-IOV exhibited graphics throughput of roughly 28 fps, while the one equipped with it performed at 60 fps—a common target for smooth graphics rendering.
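To put those frame rates in perspective, the short calculation below converts them into time per frame. It uses only the two figures reported for the proof of concept; the function names are illustrative. At roughly 28 fps each frame takes about 35.7 ms, while at 60 fps each frame takes about 16.7 ms, which works out to a little over twice the throughput.

```python
def frame_time_ms(fps):
    """Return the average time spent on one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def speedup(baseline_fps, improved_fps):
    """Return how many times faster the improved frame rate is."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return improved_fps / baseline_fps


if __name__ == "__main__":
    without_sriov = 28  # fps reported without SR-IOV (approximate)
    with_sriov = 60     # fps reported with SR-IOV
    print(f"Without SR-IOV: {frame_time_ms(without_sriov):.1f} ms per frame")
    print(f"With SR-IOV:    {frame_time_ms(with_sriov):.1f} ms per frame")
    print(f"Speedup:        {speedup(without_sriov, with_sriov):.2f}x")
```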

Of course, the performance gains from SR-IOV are not confined to video streaming; the technology can be applied to a wide range of Artificial Intelligence of Things (AIoT) workloads in an industrial context. For example, it is at the heart of DFI’s virtualized industrial automation and retail solutions.

“You now only need one computer to output to many screens,” Liu explains. “Imagine an industrial product line, where every manufacturing stage has its own display. The displays at every stage may only run temporarily.”

“In the past, such applications needed many computers or one computer with much more powerful and expensive discrete graphics adapter cards,” he continues. “But now, one Intel embedded processor can be used.”

A Promising Future for Efficient AIoT

Intel® Graphics SR-IOV is shaping up to be a game changer in industrial automation and AIoT applications. By enabling high-performance applications to run efficiently on integrated GPUs, it lets a single system take on workloads that once required discrete graphics hardware.

The potential benefits for AI are particularly enticing. “There are more and more AI applications that need powerful computing,” Liu states. “The applications are more and more complicated, with different functions. So, the new generation of processors and graphics will provide a more flexible and powerful solution for AI and other application needs.”

“With SR-IOV, we will open up a new chapter in IIoT development,” he concludes.

This article was edited by Christina Cardoza, Associate Editorial Director for insight.tech.