Need More IoT Data? Try Stream Processing

According to a recent IDC white paper, electronic systems will produce an incredible 160 zettabytes (ZB) of data globally by 2025. Almost 25 percent of that data will be produced by real-time IoT devices. Unfortunately, a lack of available storage capacity means only a fraction of that real-time data can be saved.

The data explosion combined with limited storage capacity poses serious questions:

- How do you avoid losing valuable information, and the insights it can provide, while storing as little data as possible?

- How do you correlate events in real time so that your business can be proactive rather than reactive?

- How do you act on those correlations quickly enough to better serve customers and outpace competitors?

Steve Wilkes, CTO and Co-Founder of Striim, Inc., believes the answer is in-memory streaming data processing and analytics.

“If you can't store all the data—in fact, if you can only store a small fraction of it—you're left with the conclusion that you have to process and analyze the data in memory before it ever hits storage, in a streaming fashion,” says Wilkes.

In-Memory Streaming Data Processing and Analytics: The Basics

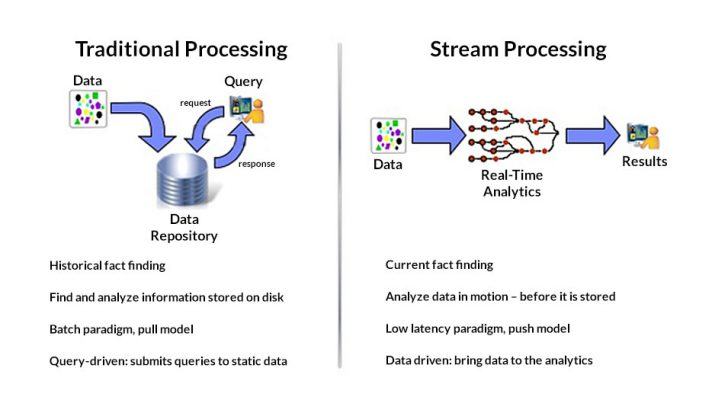

Stream processing allows continuous data flows to be analyzed in memory, with only state changes exported to a file system or database (Figure 1). This approach, known as change data capture (CDC), is particularly useful in an IoT context because it lets a system keep the relevant information while filtering out less-valuable data points.

“If you think about why we have batch processing of data, it's really because historically storage was cheaper than CPU and memory,” Wilkes explains. “The notion of batches is really an artifact from previous technology limitations.” Now that both CPU and memory are much cheaper, Wilkes continues, you can use change data capture to turn that data into a continuous stream.

A good example of how stream processing and CDC can benefit an IoT deployment is a temperature monitoring application. Rather than blindly writing batches of identical temperature values to a database, the application compares each new machine log entry with the last recorded value. If the new log contains the same temperature reading, it is discarded. If it contains a different value, that value is written to the database, becomes the new reference point, and the cycle repeats.
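The compare-and-discard loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Striim's implementation: readings are assumed to arrive as `(timestamp, temperature)` tuples, and the function name `changes_only` and its `tolerance` parameter are hypothetical.

```python
from typing import Iterable, Iterator, Optional, Tuple

# Hypothetical reading layout for this sketch: (timestamp, temperature_c).
Reading = Tuple[float, float]


def changes_only(readings: Iterable[Reading],
                 tolerance: float = 0.0) -> Iterator[Reading]:
    """Yield a reading only when it differs from the last value written.

    `last_written` stands in for "the last recorded value in the database";
    keeping it in memory means no database lookup is needed per reading.
    `tolerance` is an assumption added for this sketch: 0.0 means only exact
    repeats are discarded, a small positive value also ignores sensor noise.
    """
    last_written: Optional[float] = None
    for ts, temp in readings:
        if last_written is not None and abs(temp - last_written) <= tolerance:
            continue  # same as the stored value: discard
        last_written = temp  # new reference point for later comparisons
        yield ts, temp  # a state change: forward it to storage


stream = [(0, 21.5), (1, 21.5), (2, 21.5), (3, 22.0), (4, 22.0), (5, 21.5)]
written = list(changes_only(stream))
print(written)  # [(0, 21.5), (3, 22.0), (5, 21.5)]
print(f"{len(written)} of {len(stream)} readings stored")  # 3 of 6 readings stored
```

Only three of the six readings reach storage in this toy stream; in a real deployment the savings depend entirely on how often the monitored value actually changes.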

The obvious benefit of stream processing and CDC is that less storage is required because so much repetitive data can be ignored. The ancillary benefits include:

- Faster, more insightful analytics from smaller, more meaningful data sets

- Reduced network transmission costs associated with smaller data sets

- Better use of processor clock cycles since less time is spent analyzing batches of archived machine logs that are largely composed of duplicate information

Stream Processing From IoT Edge to Enterprise

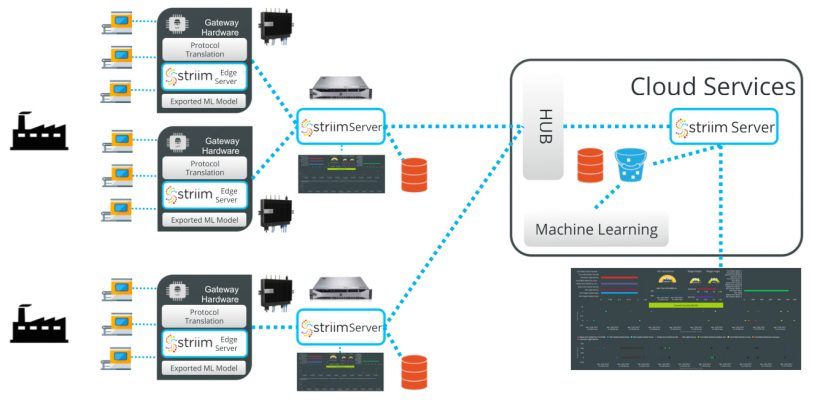

In IoT edge use cases, stream processing and CDC are often deployed on a gateway or on-premises server, which gives developers maximum data parallelism and compute density (Figure 2). Applying CDC functions to one or more streaming input data sets that share the same processor I/O and memory resources helps optimize performance and reduce latency, because the data is filtered where it arrives instead of being moved elsewhere first.
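One way to picture the data parallelism mentioned above is to split an interleaved feed into per-sensor partitions and run the same change-only filter on each partition independently. The sketch below assumes a hypothetical `(sensor_id, timestamp, value)` event layout and hypothetical function names; it illustrates the idea rather than describing any particular gateway software.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Iterable, List, Optional, Tuple

# Hypothetical event layout: (sensor_id, timestamp, value).
Event = Tuple[str, float, float]


def partition_by_sensor(events: Iterable[Event]) -> Dict[str, List[Event]]:
    """Split an interleaved input into one time-ordered list per sensor."""
    parts: Dict[str, List[Event]] = defaultdict(list)
    for event in events:
        parts[event[0]].append(event)
    return dict(parts)


def changes_in_partition(events: List[Event]) -> List[Event]:
    """Change-only filter for one sensor; its state never leaves the partition."""
    kept: List[Event] = []
    last: Optional[float] = None
    for event in events:
        if event[2] != last:
            kept.append(event)
            last = event[2]
    return kept


def parallel_changes(events: Iterable[Event], workers: int = 4) -> Dict[str, List[Event]]:
    parts = partition_by_sensor(events)
    # Partitions share no state, so they can be processed concurrently.
    # Threads keep the sketch simple; CPU-heavy Python work would usually
    # use ProcessPoolExecutor instead because of the global interpreter lock.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(changes_in_partition, parts.values())
        return dict(zip(parts.keys(), results))


events = [("a", 0, 1.0), ("b", 0, 5.0), ("a", 1, 1.0), ("b", 1, 6.0), ("a", 2, 2.0)]
print(parallel_changes(events))
# {'a': [('a', 0, 1.0), ('a', 2, 2.0)], 'b': [('b', 0, 5.0), ('b', 1, 6.0)]}
```

Because each partition keeps its own "last value" state, partitions never need to coordinate, which is what allows the work to spread across cores.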

Gateway and server processors also tend to be multicore devices with ample on-chip memory and integrated GPUs or signal-processing capabilities. These characteristics are well aligned with the largely signal-based data streams flowing from sensors and actuators at the network edge, and also support computationally intensive workloads like machine learning (ML) that can be integrated into more complex event-processing data flows.

Given these characteristics, Intel® Core™ and Intel® Xeon® processors are well suited to stream processing.
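The complex event-processing data flows mentioned above typically derive higher-level events, such as alerts, from patterns in raw readings. The following minimal sketch keeps a sliding window of recent values in memory and emits an alert event when a scoring function flags a reading. The z-score scorer is a simple, hypothetical stand-in for the kind of ML model the text describes; any function with the same signature could be plugged in, and the window size and threshold are arbitrary choices.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable, Deque, Iterable, Iterator, Sequence, Tuple

# A scorer maps (recent history, new value) to an anomaly score.
Scorer = Callable[[Sequence[float], float], float]


def zscore(history: Sequence[float], value: float) -> float:
    """Hypothetical stand-in for an ML model: distance from the window mean
    in units of standard deviation."""
    mu = mean(history)
    sd = pstdev(history)
    if sd == 0:
        # A perfectly flat history: any change at all is treated as anomalous.
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sd


def detect_anomalies(values: Iterable[float], window: int = 5,
                     threshold: float = 3.0,
                     scorer: Scorer = zscore) -> Iterator[Tuple[int, float, float]]:
    """Yield (index, value, score) alert events for readings whose score,
    relative to the preceding `window` readings, exceeds `threshold`."""
    history: Deque[float] = deque(maxlen=window)  # bounded in-memory state
    for i, value in enumerate(values):
        if len(history) == window:  # score only once the window is full
            score = scorer(history, value)
            if score > threshold:
                yield i, value, score
        history.append(value)


readings = [20.0, 20.2, 19.9, 20.1, 20.0, 20.1, 35.0]
for i, value, _ in detect_anomalies(readings):
    print(i, value)  # 6 35.0
```

Swapping `zscore` for a heavier model changes only the `scorer` argument; the windowing and alert logic stay the same, which is where multicore CPUs or integrated GPUs would absorb the extra compute.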

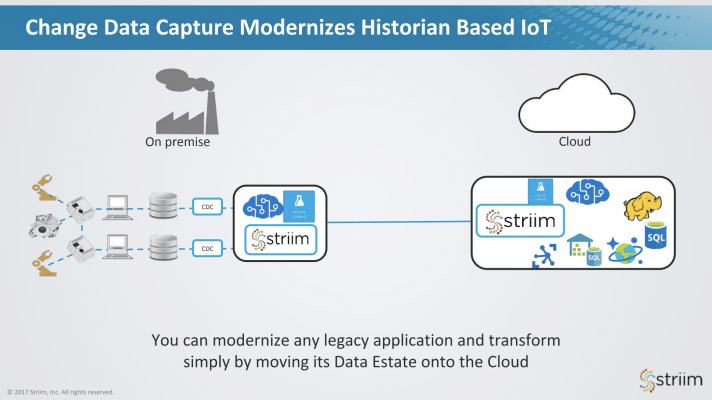

Beyond the edge, stream processing and CDC can also benefit the enterprise by modernizing existing systems to meet the architectural requirements of real-time IoT data. Enterprise historian databases, which hold large amounts of operational data aggregated over time, can use CDC and stream processing to gain the same storage savings, reduced networking costs, and improved analytics capabilities available in gateways or on-premises servers (Figure 3).

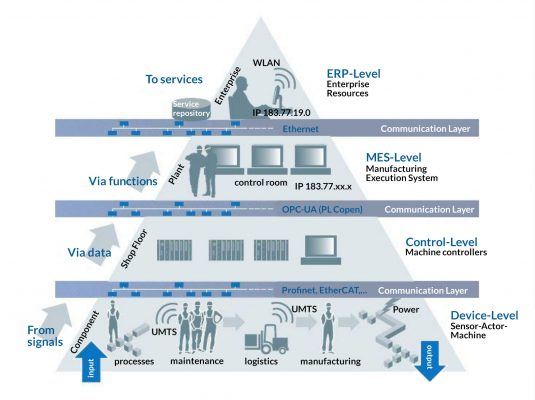

Industries such as manufacturing, healthcare, and cybersecurity, which have traditionally needed hours, days, or longer to extract valuable information from large data lakes, can therefore capitalize on an event-driven infrastructure that makes operational insights available in minutes or seconds. The overall result is more seamless, transparent data flow north to south, east to west, or in whatever direction information needs to travel across an IoT architecture (Figure 4).

“By utilizing change data capture within the framework of this architecture you can treat historian data as if it was real-time IoT data,” Wilkes says. “It's a way of modernizing your existing investments in manufacturing or other equipment that writes to a database.”
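One simple way to treat historian data as a stream is query-based change capture: poll the historian table for rows newer than the last one seen and forward only those rows. The sketch below uses Python's built-in `sqlite3` module with a hypothetical `historian` table as a stand-in for a real historian. Many production CDC tools read the database's transaction log instead of polling, and an ID watermark like this one captures only inserts, not updates or deletes.

```python
import sqlite3
from typing import List, Tuple

# Hypothetical historian row layout: (id, tag, value).
Row = Tuple[int, str, float]


def poll_new_rows(conn: sqlite3.Connection, last_id: int) -> Tuple[List[Row], int]:
    """Return rows inserted after `last_id`, plus the new high-water mark.

    The caller keeps the mark between polls, so each row is emitted once.
    """
    rows = conn.execute(
        "SELECT id, tag, value FROM historian WHERE id > ? ORDER BY id",
        (last_id,),
    ).fetchall()
    new_last = rows[-1][0] if rows else last_id
    return rows, new_last


# Demo with an in-memory table standing in for an existing historian.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE historian (id INTEGER PRIMARY KEY, tag TEXT, value REAL)")
conn.executemany("INSERT INTO historian (tag, value) VALUES (?, ?)",
                 [("pump1.temp", 61.0), ("pump1.temp", 61.4)])
batch, mark = poll_new_rows(conn, 0)
print(batch, mark)  # [(1, 'pump1.temp', 61.0), (2, 'pump1.temp', 61.4)] 2

# The legacy application keeps writing; the next poll picks up only the new row.
conn.execute("INSERT INTO historian (tag, value) VALUES (?, ?)", ("pump1.temp", 62.1))
batch, mark = poll_new_rows(conn, mark)
print(batch, mark)  # [(3, 'pump1.temp', 62.1)] 3
```

The existing equipment and database are left untouched; only a reader is added alongside them, which matches the "modernizing your existing investments" idea in the quote.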

The Striim platform, for example, is an SQL-based streaming integration and analytics software suite that allows real-time, in-memory CDC to be applied from sensor nodes through to enterprise databases. Applications in the Striim environment are developed as data flows, which begin with a data source, are processed with SQL at some point in the hierarchy, and are written to a relevant file system, database, or cloud repository.
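The source-to-SQL-to-target shape of such a data flow can be mimicked with general-purpose tools. The following sketch is not Striim code or syntax; it uses Python's built-in `sqlite3` in-memory database to run an ordinary SQL query over each fixed-size (tumbling) window of events and appends the results to a list that stands in for a file, database, or cloud target. The event layout, table name, window size, and threshold are all hypothetical.

```python
import sqlite3
from typing import Iterable, Iterator, List, Tuple

# Hypothetical event layout: (machine_id, temperature).
Event = Tuple[str, float]

# The SQL "processing" step: per-machine summary for machines that ran hot
# during the window. Table and column names are illustrative only.
QUERY = """
    SELECT machine_id, AVG(temp) AS avg_temp, MAX(temp) AS max_temp
    FROM window_events
    GROUP BY machine_id
    HAVING MAX(temp) > ?
    ORDER BY machine_id
"""


def source(events: Iterable[Event], size: int) -> Iterator[List[Event]]:
    """Source step: cut the stream into fixed-size tumbling windows."""
    window: List[Event] = []
    for event in events:
        window.append(event)
        if len(window) == size:
            yield window
            window = []
    if window:  # flush a final, partial window
        yield window


def run_flow(events: Iterable[Event], size: int, limit: float) -> List[tuple]:
    conn = sqlite3.connect(":memory:")  # processing state stays in memory
    conn.execute("CREATE TABLE window_events (machine_id TEXT, temp REAL)")
    target: List[tuple] = []  # stand-in for a file, database, or cloud target
    for window in source(events, size):
        conn.execute("DELETE FROM window_events")  # start each window empty
        conn.executemany("INSERT INTO window_events VALUES (?, ?)", window)
        target.extend(conn.execute(QUERY, (limit,)).fetchall())
    conn.close()
    return target


events = [("m1", 70.0), ("m2", 60.0), ("m1", 90.0),
          ("m2", 62.0), ("m1", 71.0), ("m2", 95.0)]
print(run_flow(events, size=4, limit=80.0))
# [('m1', 80.0, 90.0), ('m2', 95.0, 95.0)]
```

Windows here are cut by event count for simplicity; streaming engines more commonly offer time-based windows as well.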

Striim integrates with many enterprise software tools to help correlate multiple complex data streams and deliver the results in a compatible file or database format.
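Correlating streams often comes down to a windowed join: pairing events from two inputs that share a key and occur close together in time. The sketch below is a generic illustration, not Striim's join feature; it assumes both inputs are already sorted by timestamp and uses a hypothetical `(timestamp, machine_id, payload)` layout.

```python
import heapq
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, Iterator, Tuple

# Hypothetical event layout: (timestamp, machine_id, payload).
Event = Tuple[float, str, str]


def windowed_join(left: Iterable[Event], right: Iterable[Event],
                  within: float) -> Iterator[Tuple[str, Event, Event]]:
    """Yield (key, left_event, right_event) for events with the same key whose
    timestamps differ by at most `within`. Both inputs must be time-sorted."""
    # Tag each event with its side (0 = left, 1 = right), then merge by time.
    merged = heapq.merge(((t, 0, k, p) for t, k, p in left),
                         ((t, 1, k, p) for t, k, p in right))
    buffers: Tuple[Dict[str, Deque[Event]], ...] = (defaultdict(deque),
                                                     defaultdict(deque))
    for t, side, key, payload in merged:
        event = (t, key, payload)
        own, other = buffers[side][key], buffers[1 - side][key]
        # Events older than the window can never match anything still to come.
        for buf in (own, other):
            while buf and t - buf[0][0] > within:
                buf.popleft()
        for match in other:
            yield (key, event, match) if side == 0 else (key, match, event)
        own.append(event)


temps = [(0.0, "m1", "temp=91"), (10.0, "m2", "temp=88")]
vibes = [(2.0, "m1", "vib=high"), (30.0, "m2", "vib=high")]
print(list(windowed_join(temps, vibes, within=5.0)))
# [('m1', (0.0, 'm1', 'temp=91'), (2.0, 'm1', 'vib=high'))]
```

Each pair is emitted once, when its later event arrives. State for a key that goes quiet is kept until that key reappears; a production system would also expire idle keys.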

High-Velocity IoT Analytics Minimizes Storage, Maximizes Agility

With an increasing amount of real-time data being generated by IoT devices, organizations must weigh the cost of retaining that data, and the time required to manage and analyze it, against the actionable information it offers. As most organizations look to capitalize on their newest, most valuable data, in-memory stream processing and analytics offers an alternative to storing every data point, one that minimizes capital and operational expenses while accelerating business agility and decision cycles.

“This streaming approach to things helps you move to ideas of transformation where you can integrate and join data in real time,” Wilkes says. That real-time integration may well be the final component of the IoT digital transformation we've all been waiting for.

To learn more about in-memory stream processing, watch the webinar “Addressing the Fundamental Challenges to IoT Data Management.”