Engineer in a Box: Deep-Learning AI for IoT Gateways

As sensing nodes increase, it doesn't take long for a trickle of data to become a stream, then a river, and soon a lake. Engineers tasked with managing machinery and systems can find themselves quickly overwhelmed.

General-purpose data platforms may not be much help. Instead, analysis software needs to "think like an engineer." That is, it needs a framework for handling the engineer's priorities of maintenance, trouble prediction, and troubleshooting.

This is exactly the goal Flutura had in mind when it created the Cerebra platform. Cerebra's key differentiator is that it presents data using an engineer's mental model.

"With Cerebra, we are able to translate the data lake into the engineer’s framework with an algorithm that detects frequent 'incidences' that would lead to [system] failure," said Derick Jose, chief product officer at Flutura.

The Engineer's Mental Model

Anyone with engineering training will quickly recognize the mental model Jose describes, which Cerebra uses to present the data on an asset:

- What process is the asset following? (Clarify the operational intent and parameters.)

- What are the ways an asset could fail? (Define failure modes.)

- What's the nature of the interventions done on the asset within the past 90 days? (Track maintenance and repair history.)

- What are the ambient conditions of operation? (Are you in Siberia or Texas?)

- How often has it tripped an alarm? How often did that alarm go unattended? (Analyze historical behavior.)
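To make the five questions concrete, here is a minimal Python sketch of a record that groups them for one asset. It is only an illustration: the names `AssetView`, `Alarm`, `recent_interventions`, and `unattended_alarm_ratio` are hypothetical and are not taken from Cerebra, whose meta model is not public.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class Alarm:
    raised_at: datetime
    attended: bool  # False if nobody acknowledged the alarm


@dataclass
class AssetView:
    """Hypothetical container mirroring the five questions in the list above."""
    process: str                     # operational intent and parameters
    failure_modes: List[str]         # known ways the asset can fail
    interventions: List[datetime]    # maintenance and repair timestamps
    ambient: Dict[str, float]        # e.g. {"temperature_c": -30.0}
    alarms: List[Alarm] = field(default_factory=list)

    def recent_interventions(self, now: datetime, days: int = 90) -> List[datetime]:
        """Return interventions that happened within the last `days` days."""
        cutoff = now - timedelta(days=days)
        return [t for t in self.interventions if t >= cutoff]

    def unattended_alarm_ratio(self) -> float:
        """Fraction of alarms that went unattended (0.0 if there were none)."""
        if not self.alarms:
            return 0.0
        missed = sum(1 for alarm in self.alarms if not alarm.attended)
        return missed / len(self.alarms)
```

Keeping the 90-day window as a parameter rather than a constant lets the same record answer the question for other review periods.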

"Unless you reflect this view of the world on the data product, it’s very difficult for the consumers of the information to process that and take an actionable insight out of it," said Jose.

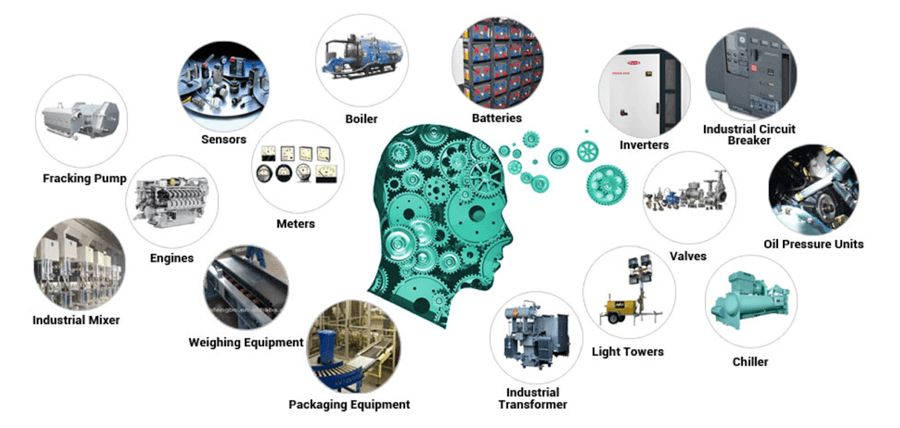

While the model can be applied to any IoT application, Flutura is currently focused on the energy and engineering industries (Figure 1).

Mapping Data to the Mental Model

The process of turning a raw data lake into an engineer's mental model is patent-pending. While the company can engage with a client to deploy everything from sensors through to the gateway, its core IP resides in its algorithm.

The algorithm begins by breaking down incoming data into multiple stream incidences, or instantaneous parameters. These could be alarms, trips, or state transitions that are then broken down according to the frequency and texture of the data. Cerebra then applies the relevant meta models of electrical, hydraulic, or thermal subsystems, their various fault modes, and the leading causes of those fault modes. Each meta model consists of 180 data elements spread across 65 entities.
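Flutura's algorithm is patent-pending and its details are not published, so the following is only a rough sketch of the first step described above: scanning a stream for alarms, trips, and state transitions. The `Incidence` type, the `extract_incidences` function, and the two threshold parameters are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass
class Incidence:
    timestamp: float
    kind: str    # "alarm", "trip", or "state_transition"
    detail: str


def extract_incidences(
    samples: Iterable[Tuple[float, float, str]],
    alarm_limit: float,
    trip_limit: float,
) -> List[Incidence]:
    """Scan (timestamp, value, state) samples and collect incidences.

    Assumed rules for this sketch: a value at or above `trip_limit` is a
    trip, a value at or above `alarm_limit` (but below the trip limit) is
    an alarm, and any change in `state` is a state transition.
    """
    incidences: List[Incidence] = []
    prev_state: Optional[str] = None
    for timestamp, value, state in samples:
        if value >= trip_limit:
            incidences.append(Incidence(timestamp, "trip", f"value={value}"))
        elif value >= alarm_limit:
            incidences.append(Incidence(timestamp, "alarm", f"value={value}"))
        if prev_state is not None and state != prev_state:
            incidences.append(
                Incidence(timestamp, "state_transition", f"{prev_state}->{state}")
            )
        prev_state = state
    return incidences
```

A real deployment would presumably derive the limits per subsystem from the relevant meta model rather than pass in two fixed numbers.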

"We translate all of the data lake into the Cerebra meta model, and once it has properly met the model, the engineer gets a complete view on the Cerebra platform," said Jose.

The inputs to the model could be sensors, ambient conditions, history of maintenance, historical events, and even the relationship of the asset to adjacent assets. Even if they aren't connected, a hot or electrically noisy motor can affect a nearby electronic board or system. Few systems operate in complete isolation.

High Sensitivity

The deep-learning algorithm is constantly at work breaking down the noisy data waveforms into what Flutura calls "feature vectors" and analyzing those. "We have about 220-plus waveform feature vectors which uniquely describe a wave, and that’s something that we have a lot of IP on," said Jose. "Once you start deep learning on these feature vectors, it tells the story of the asset much better than deep learning on the raw time-series data itself."
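The 220-plus features Flutura uses are proprietary, but the general idea of summarizing a waveform as a feature vector can be sketched with a handful of textbook signal descriptors. The function name `waveform_features` and the choice of these five features are assumptions for illustration only.

```python
import math
from typing import Dict, Sequence


def waveform_features(wave: Sequence[float]) -> Dict[str, float]:
    """Summarize a sampled waveform with a few standard descriptors."""
    if not wave:
        raise ValueError("waveform must contain at least one sample")
    n = len(wave)
    mean = sum(wave) / n
    rms = math.sqrt(sum(x * x for x in wave) / n)
    peak = max(abs(x) for x in wave)
    # Count sign changes between consecutive samples.
    zero_crossings = sum(
        1 for a, b in zip(wave, wave[1:]) if (a < 0) != (b < 0)
    )
    return {
        "mean": mean,
        "rms": rms,
        "peak_to_peak": max(wave) - min(wave),
        "crest_factor": peak / rms if rms > 0 else 0.0,
        "zero_crossings": float(zero_crossings),
    }
```

A learning model would then be trained on vectors like this one instead of on the raw time series, which is the approach Jose describes.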

What really distinguishes Cerebra is the algorithm's sensitivity to small changes in the waveform and its ability to correlate those disturbances with failure sequences that have happened in the past.
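As a simplified stand-in for that correlation step, the sketch below compares a current feature vector against stored signatures of past failures using cosine similarity. This is not deep learning and not Flutura's method. The function names and the example signature labels are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def closest_failure(
    current: Sequence[float],
    signatures: Dict[str, Sequence[float]],
) -> Tuple[str, float]:
    """Return the past failure signature most similar to `current`."""
    if not signatures:
        raise ValueError("at least one failure signature is required")
    scored = (
        (name, cosine_similarity(current, signature))
        for name, signature in signatures.items()
    )
    return max(scored, key=lambda pair: pair[1])
```

For example, with signatures `{"bearing_wear": [1.0, 0.0], "overheating": [0.0, 1.0]}`, a current vector of `[0.9, 0.1]` is matched to `"bearing_wear"`.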

Lower Costs and New Revenue Models

Once the data has been gathered and analyzed accurately over time, further benefits follow. For example, the consumer of the data can be anyone, from the engineer on-site to a remote maintenance team. Where routine maintenance once required a truck roll or a helicopter deployment, the analysis now determines whether the trip is needed at all, saving on overhead costs.

At a higher level, the data can be used to further reduce cost through improved quality and better processes. For equipment vendors, service contracts for sold or leased equipment can be rewritten based on geography and ambient conditions: North Dakota is different from Texas or Venezuela. On the question of equipment liability, if a piece of equipment does fail in the field, the supplier can determine whether it was being used under conditions not covered by the warranty.

Going further, predictive analysis is desirable as it can reduce downtime, but it also opens up the opportunity for equipment vendors and OEMs to develop "prognostics as a service," "monitoring as a service," or "diagnostics as a service" business models for additional – recurring – revenue.

"We call it the digital umbilical cord," said Jose. "So that's what we do."

Many would also call the ability to generate new, recurring revenue streams the "Holy Grail."

Putting the Smarts in a Box

Cerebra itself is hardware agnostic, but Flutura has already helped customers implement it on a Dell Edge Gateway 5000 Series, which uses an Intel Atom® processor (Figure 2).

Although Cerebra sits in the software stack on the edge device, it depends on deep-learning algorithms to detect the most important features that lead to failure. The algorithms have yet to be benchmarked against the various processing architectures, but Jose noted that performance enhancements could come about through the use of Intel processors. This is fortunate, given that "most of the gateways that we use, I'd say 95 percent of them, have Intel processors embedded inside," said Rick Harlow, executive vice president and head of Americas at Flutura.

Making the Most of the Engineer's View

Engineers tasked with monitoring, diagnosing, and fixing large-scale equipment, from factories to oil rigs, need to have information presented to them in a way that makes sense. Flutura's Cerebra machine intelligence platform uses deep-learning algorithms to present data using an engineer's worldview.

As a result, equipment owners can reduce downtime and improve processes, while equipment vendors can add new, recurring revenue streams that include "prognostics as a service" and "diagnostics as a service."