Image Segmentation: Exploring the Power of Segment Anything

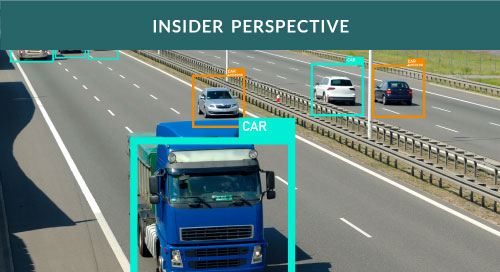

Innovation in technology is an amazing thing, and these days it seems to be moving faster than ever. (Though never quite fast enough that we stop saying, “If only I had had this tool, then how much time and effort I would have saved!”) This is particularly the case with AI and computer vision, which transform operations across industries and become incredibly valuable for many kinds of business. And in the whole AI/computer vision puzzle, one crucial piece is image segmentation.

Paula Ramos, AI Evangelist at Intel, explores this rapidly changing topic with us. She discusses image-segmentation solutions past, present, and future; dives deep into the recently released SAM (Segment Anything Model) from Meta AI (Video 1); and explains how resources available from the Intel OpenVINO™ toolkit can make SAM even better.

What is the importance of image segmentation to computer vision?

There are multiple computer vision tasks, and I think that image segmentation is the most important one. It plays a crucial role in object detection, recognition, and analysis. Maybe the question is: Why is it so important? And the answer is very simple: Image segmentation helps to isolate individual objects from the background or from other objects. We can localize important information with image segmentation; we can create metrics around specific objects; and we can extract features that help in understanding a specific scenario. All of that is really, really important to computer vision.

What challenges have developers faced building image-segmentation solutions in the past?

When I was working with image segmentation in my doctoral thesis, I was working in agriculture. I faced a lot of challenges with it because there were multiple techniques for segmenting objects—thresholding, edge detection, region growing—but no one-size-fits-all approach. And depending on which technique you are using, you need to carefully define the best approach.

My work was in detecting coffee beans, and coffee beans are so similar and so close together! Red colors in the background could also be a problem. So there was over-segmentation (merging objects) happening when I was running my image-segmentation algorithm. Or there was under-segmentation, and I was missing some of the fruits.
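The two failure modes described above, touching fruits merging into one region and faint fruits being missed, can be reproduced with a minimal sketch of thresholding and region growing. The 5x7 "redness" grid, the cutoff values, and the function names are all hypothetical and chosen only for illustration; they are not taken from the interviewee's thesis work.

```python
from collections import deque

def threshold(image, cutoff):
    """Return a binary mask: True where the pixel value is at least `cutoff`."""
    return [[value >= cutoff for value in row] for row in image]

def region_grow(mask, seed):
    """Collect the 4-connected region of True pixels reachable from `seed`."""
    rows, cols = len(mask), len(mask[0])
    if not mask[seed[0]][seed[1]]:
        return set()
    region, queue = {seed}, deque([seed])
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and mask[nr][nc] and (nr, nc) not in region:
                region.add((nr, nc))
                queue.append((nr, nc))
    return region

def count_regions(mask):
    """Count foreground regions by growing from every pixel not yet visited."""
    seen, count = set(), 0
    for r, row in enumerate(mask):
        for c, is_foreground in enumerate(row):
            if is_foreground and (r, c) not in seen:
                seen |= region_grow(mask, (r, c))
                count += 1
    return count

# Hypothetical image with four fruits: two bright ones that touch (values 9
# and 8), one isolated fruit (value 7), and one faint fruit (value 4).
IMAGE = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 9, 9, 8, 8, 0, 0],
    [0, 9, 9, 8, 8, 0, 7],
    [0, 0, 0, 0, 0, 0, 7],
    [0, 4, 4, 0, 0, 0, 0],
]

strict = count_regions(threshold(IMAGE, 5))  # faint fruit missed, touching pair merged
loose = count_regions(threshold(IMAGE, 4))   # faint fruit found, pair still merged
print(strict, loose)
```

In this toy grid no single cutoff recovers all four fruits: the strict cutoff finds two regions and the loose one finds three, which is the kind of hand-tuning that traditional pipelines require.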

That is the challenge with data, especially when it comes to image segmentation, because it is difficult to work in an environment where the light is changing and where you have different camera resolutions. You are also moving the camera, so you get some blurry images or some noise in the images. Detecting boundaries is also challenging. Another challenge for traditional image segmentation is scalability and efficiency. Depending on the resolution of the images or how large the data sets are, the computational cost will be higher, and that can limit real-time applications.

And in most cases, you need to have human intervention to use these traditional methods. I could have saved a lot of time in the past if I had had the newest technologies in image segmentation then.

What is the value of Meta AI’s Segment Anything Model (SAM) when it comes to these challenges?

I would have especially liked to have had the Segment Anything Model seven years ago! Basically, SAM improves the performance on complex data sets. So those problems with noise, blurry images, low contrast—those are things that are in the past, with SAM.

Another good thing SAM has is versatility and prompt-based control. Unlike traditional methods, which require specific techniques for different scenarios, SAM allows users to specify what they want to segment through prompts. And prompts can be points, boxes, or even natural-language descriptions.
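To make the idea of point and box prompts concrete, here is a toy sketch in which a prompt picks one mask out of a set of candidates. It is not SAM's API and says nothing about how the model works internally: the candidate masks, their names, and the helper functions are hypothetical, and text prompts are left out.

```python
def mask_from_box(top, left, bottom, right):
    """Build a rectangular mask as a set of (row, col) pixels, bounds inclusive."""
    return {(r, c) for r in range(top, bottom + 1) for c in range(left, right + 1)}

def select_by_point(masks, point):
    """Point prompt: names of all masks containing the pixel, smallest mask first."""
    hits = [name for name, pixels in masks.items() if point in pixels]
    return sorted(hits, key=lambda name: len(masks[name]))

def iou(a, b):
    """Intersection over union of two pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def select_by_box(masks, box):
    """Box prompt: name of the mask that overlaps the box best, measured by IoU."""
    box_pixels = mask_from_box(*box)
    return max(masks, key=lambda name: iou(masks[name], box_pixels))

# Hypothetical candidate masks on a 10x10 image.
CANDIDATE_MASKS = {
    "branch": mask_from_box(0, 0, 9, 9),
    "ripe_bean": mask_from_box(2, 2, 4, 4),
    "green_bean": mask_from_box(6, 5, 8, 7),
}

# A click on the ripe bean also lies on the branch behind it, so a single
# point is ambiguous; this sketch simply ranks the smaller object first.
print(select_by_point(CANDIDATE_MASKS, (3, 3)))

# A loosely drawn box around the green bean still selects it.
print(select_by_box(CANDIDATE_MASKS, (5, 4, 8, 8)))
```

The point example shows why a single click can be ambiguous, while a box narrows the request to one object.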

I would love to have been able to say, in the past, “I want to see just mature coffee beans” or “I want to see just immature coffee beans,” and to have had that flexibility. That flexibility can also empower developers to handle diverse segmentation tasks. I also mentioned scalability and efficiency earlier: With SAM the information can be processed faster than with the traditional methods. So these real-time applications can be made more sustainable, and the accuracy is also higher.

There are certainly some limitations, so we need to balance that, but we are also improving the performance on those complexities.

What are the business opportunities with the Segment Anything Model?

The Segment Anything Model presents several potential business opportunities across all the different image-segmentation processes that we know at this point. For example, creating or editing content in an easy way, automatically manipulating images, or creating real-time special effects. Augmented reality or virtual reality is also a field that is heavily impacted by SAM, with real-time object detection enabling the virtual elements in the interactive experience.

Another thing is maybe product segmentation in retail. SAM can automatically segment product images in online stores, enabling more efficient product sales. Categorization based on specific object features is another possible area. I can also see potential in robotics and automation to achieve more precise object identification and manipulation in various tasks. And autonomous vehicles, for sure. SAM also has the potential to assist medical professionals in tasks like tumor segmentation or making more accurate diagnoses—though I can see that there may be a lot of reservations around this usage.

And I don’t want to say that those business problems will be solved with SAM; these are potential applications. SAM is still under development, and we are still improving it.

How can developers overcome the limitations of SAM with OpenVINO™?

I think one of the good things right now in all these AI trends is that so many models are open source, and this is also a capability that we have with SAM. OpenVINO is also open source, and developers can access this toolkit very easily. Every day we put multiple AI trends in the OpenVINO Notebooks repository—something happens in the AI field, and two or three days after that we have the notebook there. And good news for developers: We already have optimization pipelines for SAM in the OpenVINO repository.

We have a series of four notebooks there right now. The first one is the Segment Anything Model that we have been talking about; this is the most common one. You can compile the model and run it with OpenVINO directly, and you can also optimize the model using the Neural Network Compression Framework (NNCF).
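One of the optimizations NNCF provides is post-training quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below shows only the arithmetic behind symmetric int8 quantization on a handful of made-up weights; it does not use NNCF or OpenVINO, and the function names and values are assumptions for illustration.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

# Hypothetical weights; a real model would have millions of them.
WEIGHTS = [0.50, -1.27, 0.003, 0.90]

quantized, scale = quantize_int8(WEIGHTS)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half the scale step.
max_error = max(abs(w - r) for w, r in zip(WEIGHTS, restored))
print(quantized, scale, max_error)
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error. Very small weights, such as 0.003 here, round to zero, which is one reason accuracy should be checked after quantizing.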

Second, we have the Fast Segment Anything Model. The original SAM is a heavy transformer model that requires a lot of computational resources. We can solve the problem with quantization, for sure, but FastSAM decouples the Segment Anything task into two sequential stages using YOLOv8.

We then have EfficientSAM, a lightweight SAM model that exhibits the SAM performance with largely reduced complexity. And the last resource, which was posted in the OpenVINO repository recently, is GroundingDINO plus SAM, called GroundedSAM. The idea is to find the bounding boxes and at the same time segment everything in those bounding boxes.

And the really good thing is that you don’t need to have a specific machine to run these notebooks; you can run them on your laptop and see the potential to have image segmentation with some models right there.

How will OpenVINO continue to evolve as SAM and AI evolve?

I think that OpenVINO is a great tool for reducing the complexity of building deep learning applications. If you have expertise in AI, it’s a great place to learn more about AI trends and also to understand how OpenVINO can improve your day-to-day routine. But if you are a new developer, or if you are a developer but not an expert in AI, it’s a great starting point as well because you can see the examples that we have there and you can follow every single cell in the Jupyter Notebooks.

So for sure we’ll continue creating more examples and more OpenVINO notebooks. We have a talented group of engineers working on that. We are also trying to create meaningful examples—proofs of concept that can be utilized day to day.

Another thing is that last December the AI PC was launched. I think that this is a great opportunity to understand capabilities that we are enhancing every day—improving the hardware that developers utilize so that they don’t need to have any specific hardware to run the latest AI trends. It is possible to run models on your laptop and also improve your performance.

I was a beginner developer myself some years ago, and I think for me it was really important to understand how AI was moving at that moment, to understand the gaps in the industry, to stay one step ahead of the curve, to improve, and to try to create new things.

And something else that I think is important for people to understand is that we are looking for what your need is: What are the kinds of things you want to do? We are open to contributions. Take a look at the OpenVINO Notebooks repository and see how you can contribute to it.

Related Content

To learn more about image segmentation, listen to Upleveling Image Segmentation with Segment Anything and read Segment Anything Model—Versatile by Itself and Faster by OpenVINO. For the latest innovations from Intel, follow them on X at @Intel and on LinkedIn.

This article was edited by Erin Noble, copy editor.