Systems Integrators Find New Ways to Direct City Traffic

San Francisco is just one example of a transportation landscape gone wild. Since 2018, vehicle traffic is up 27 percent. And alternative transportation sharing has skyrocketed. On an average weekday, city streets are filled with 6,300 bike shares; 2,000 mopeds; and 2,300 power scooters.

In addition to traditional forms of transportation, rideshare options like Uber and Lyft abound. While these new options make travel more convenient than ever, they’re pushing the limits of transportation management systems.

To keep up with such dynamic transportation trends, city managers need 21st-century solutions to optimize traffic planning, commuter and pedestrian safety, and emergency services well into the future—a notable opportunity for systems integrators (SIs) to build out their technology and service offerings and win new business.

Their city manager customers need better data to really know how one form of transportation influences another. They need situational awareness of events over a period of weeks, days, and hours—not years or decades.

To address these challenges, technology providers have developed intelligent transportation systems (ITS), because IoT and artificial intelligence (AI) technologies are crucial for real-time transportation management. And for SIs who don’t have ready access to them or deep knowledge of how they work, solutions aggregators can help.

Layers of Management

For this particular use case, existing sensors like traffic monitoring cameras give transit managers a head start. The next step is deploying a tiered data intelligence architecture within ITS solutions.

Justin Bean, Global Director of Smart Spaces and Video Intelligence Marketing at Hitachi Vantara, explained how.

“With computer vision and machine learning, we’re able to analyze existing data and transform it into a wealth of insights,” he said. “For example, we can look at the composition of traffic. How many bikes, cars, trucks, and buses are on the street? Where are cars parking? What is the flow of people on sidewalks and in crosswalks?”

This type of computer vision requires a large amount of processing performance that isn’t available on legacy cameras already in the field. Instead, edge gateways or servers can be used. These systems compile video streams, apply computer vision algorithms in real time, and send relevant metadata back to operations centers for more analysis.
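As a rough illustration of that last step, the sketch below reduces per-frame detections to a compact metadata message that a gateway could forward instead of raw video. The detection format, field names, and the `summarize_window` function are assumptions made for this example, not part of any Intel or Hitachi product.

```python
import json
from collections import Counter


def summarize_window(camera_id, window_start, detections):
    """Collapse object detections from one time window into a small JSON message.

    `detections` is a hypothetical list of dicts such as {"label": "bike"},
    standing in for whatever a computer vision model emits on the gateway.
    """
    counts = Counter(d["label"] for d in detections)
    message = {
        "camera_id": camera_id,
        "window_start": window_start,  # ISO 8601 timestamp, by assumption
        "counts": dict(sorted(counts.items())),
    }
    return json.dumps(message)


if __name__ == "__main__":
    sample = [{"label": "car"}, {"label": "bike"}, {"label": "car"}]
    print(summarize_window("cam-12", "2024-01-01T08:00:00Z", sample))
```

The point of the design is bandwidth: the operations center receives a few counts per window rather than the video stream itself.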

One solution that enables computer vision on existing cameras is the Intel® NUC. The compact platform provides the compute and graphics performance required for tasks like automatic license plate recognition (Figure 1).

The next, and most influential, tier of data intelligence is a complete visualization suite. These software applications integrate data from sensors, gateways, and other traffic management systems. As a result, transit managers can view both real-time video streams and long-term traffic trends through a single pane of glass.

Open Framework Brings It Together

The challenge for SIs and city managers is combining these infrastructure components. For instance, different cameras and sensors leverage a mix of communication protocols and data formats. This can result in silos of information that limit the ability of transportation management systems to provide real-time feedback.

Connecting this infrastructure to visualization and analytics dashboards requires an end-to-end data acquisition strategy. And it must be based on a scalable ITS design that isn’t constrained by proprietary solutions or dependent on technologies that could soon become obsolete.

IoT application frameworks that use device connectors are one way to solve the problem. A connector is a thin layer of software that takes in data from one system and repackages it for interpretation by others. In this way, data can make its way from edge systems to cloud visualization platforms.
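The connector idea can be sketched in a few lines. The example below is a minimal illustration only: the two device formats, the `TrafficRecord` fields, and the function names are all hypothetical and do not reflect the actual interfaces of any vendor's framework.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TrafficRecord:
    """Common record that every connector produces (hypothetical schema)."""
    source: str
    timestamp: str
    kind: str
    count: int


def camera_connector(raw):
    # Assumed camera format: a CSV line "device,timestamp,kind,count".
    device, timestamp, kind, count = raw.split(",")
    return TrafficRecord(device, timestamp, kind, int(count))


def loop_sensor_connector(raw):
    # Assumed road-loop format: a dict that only ever counts vehicles.
    return TrafficRecord(raw["id"], raw["time"], "car", raw["vehicles"])


# One connector per device type; adding a device means adding an entry here.
CONNECTORS = {
    "camera_csv": camera_connector,
    "loop_dict": loop_sensor_connector,
}


def to_json(device_type, raw):
    """Run the matching connector and serialize the result as JSON."""
    record = CONNECTORS[device_type](raw)
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    print(to_json("camera_csv", "cam-12,2024-01-01T08:00:00Z,bike,14"))
    print(to_json("loop_dict", {"id": "loop-3", "time": "2024-01-01T08:00:00Z", "vehicles": 42}))
```

Because downstream dashboards only ever see the common record, a new sensor type needs a new connector but no change to the visualization layer.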

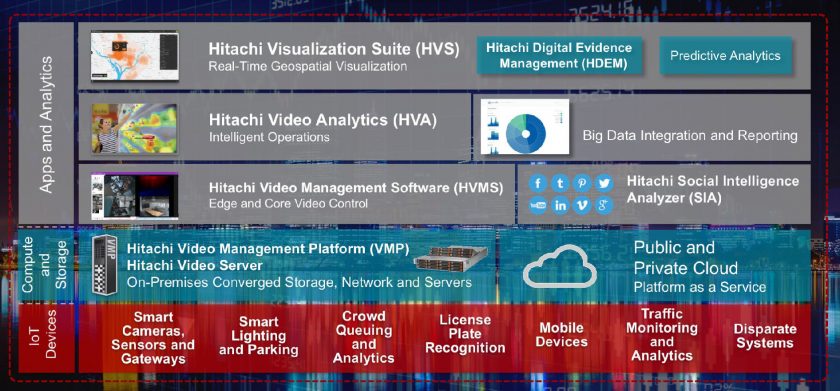

Hitachi Vantara has integrated one such framework into its Smart Spaces and Video Intelligence platform (Figure 2).

The Hitachi platform is a scalable smart city management solution that provides a loosely coupled data integration framework based on a series of connectors. Hitachi has worked with device-makers to write connectors for its platform, regardless of a device’s native communications protocol or original data format.

“Our application framework provides services that acquire multiple types of data,” explained Bean. “It turns that into metadata, which is sent through, for example, JSON format. It orchestrates that data appropriately, and pools it in a data lake.”

“That type of time-series data is easy to display on a map,” Bean said. “But if we want to tap into a video stream, we can just go directly into the footage itself.”

See how the city of Moreno Valley, California leveraged the solution to improve both traffic management and public safety (Video 1).

Trends Analysis with Computer Vision and AI

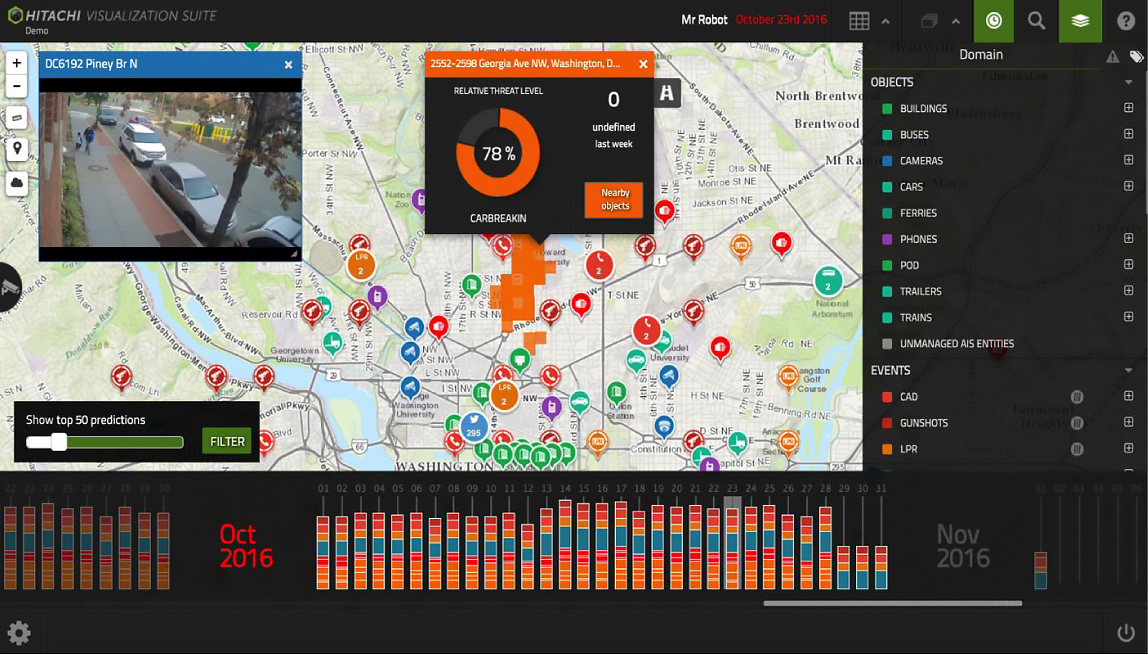

Using this framework, video analytics streams and other transportation data can be drawn into the Hitachi Visualization Suite (HVS). HVS is a smart management dashboard that supports many layers of data to help traffic operators assess the transit environment both in real time and over the long term (Figure 3).

HVS integrates both historical time-series data and real-time video streams into the same dashboard. This information can be presented in geospatial views to help transit officials visualize traffic trends.

These layers can help authorities make best use of transportation resources. HVS also allows customizable configuration of data sets into charts, graphs, and other formats, so you can help operators group one type of information with other traffic trends.

“You can also feed data into real-time applications like parking guidance apps so people know where parking is available,” said Bean. “This helps operators understand traffic flow in real time so they can adjust light timing. It also provides visibility into incident locations, pedestrian flow, transit options, and much more.”

Another important solution component is Hitachi Video Analytics, which augments the edge analytics delivered by platforms like the Intel NUC. It supports functions such as people and vehicle counting, traffic analysis, and parking detection. It also allows users to fast-forward, rewind, and search for specific objects or events that may be buried in hours’ worth of video.

Smarter Transit, Smarter Cities

The openness of Hitachi Smart Spaces and Video Intelligence allows it to merge nicely with existing transportation systems. This helps your city planner customers maintain their investments in hardware, software, and connectivity. But the solution becomes a force multiplier for smart city management when it integrates with other city management systems.

Extending the connector concept further brings even more opportunities. Data can flow between transportation systems, utilities delivery, emergency services, and other civic information repositories. And the ability to visualize it in real time means you can be instrumental in helping cities design policies that make urban areas cleaner, safer, and more convenient than ever before. That value goes well beyond the traditional SI functions of selling, integrating, and servicing.