What If Embedded Systems Had Unlimited Storage?

Data acquisition systems have always faced fundamental limits on storage capacity and processing power, but new thinking on database structure can free developers from these old constraints. Specifically, high-fidelity data storage at the edge, combined with modern data access APIs, lets architects develop more flexible and useful systems.

The Problem with Constrained Thinking

Common data acquisition and analysis challenges include figuring out:

- What to sense

- How frequently to sense it

- Where to store the data

- How long to store it

- How to access it on-demand for analysis

The problems arise in part because designers’ thinking is constrained by classic design considerations such as limited memory for storing data, limited network bandwidth, unreliable connectivity across heterogeneous networks, power consumption, and limited processing power.

This constrained thinking is typical but has three negative side effects. The first is that as soon as decisions are made, system rigidity starts to set in. IoT node deployments that make sense today may be outdated in three to five years as new thinking, technologies, and analysis requirements emerge.

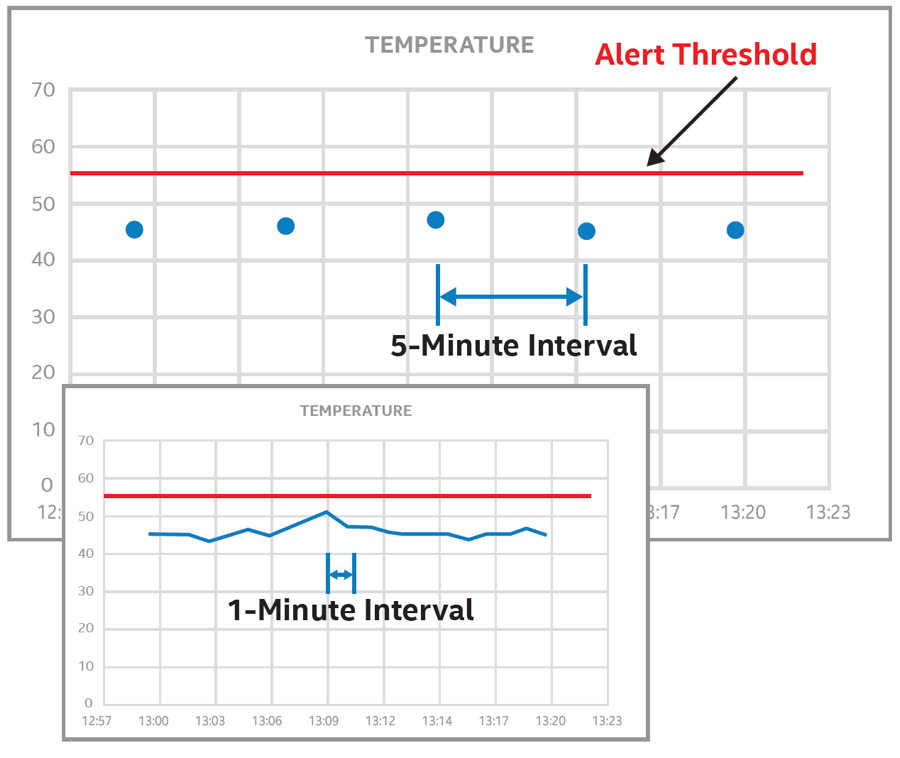

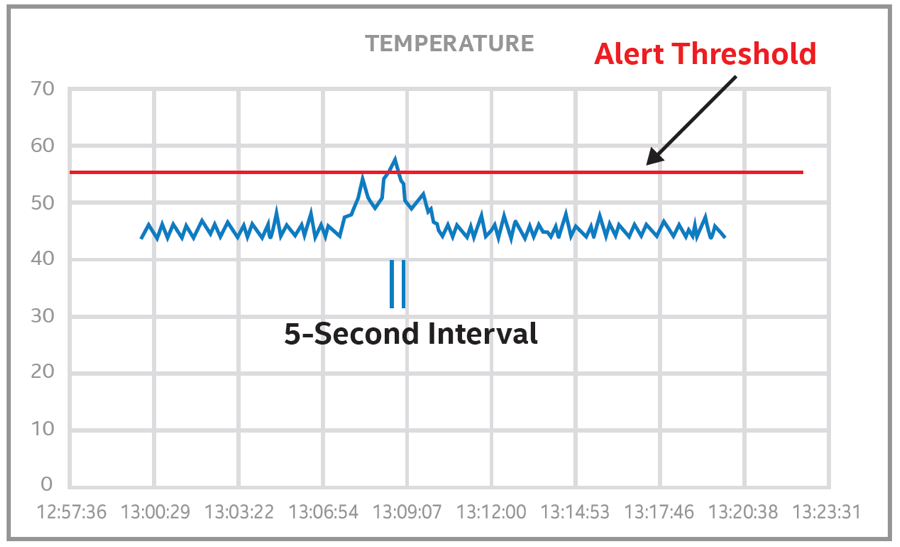

The second is that designers commit to sampling and recording specific data inputs at the lowest possible frequency to conserve limited memory resources. In doing so, they run the risk of missing important events (Figure 1a). With shorter intervals between samples, the odds of detecting an important event increase (Figure 1b).

Figure 1a: Low-frequency sampling can fail to record data needed to determine the cause of a malfunction. (Image source: Exara)

Figure 1b: Shorter intervals increase the odds of detecting an event. (Image source: Exara)
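The effect shown in Figures 1a and 1b can be reproduced with a small simulation. The following is a minimal sketch, not taken from Exara: the `pressure` signal, the 3-second spike, and the alarm threshold are all hypothetical values chosen only to illustrate how a short event can fall between widely spaced samples.

```python
def sample(signal, period_s, duration_s):
    """Return (t, value) pairs taken every period_s seconds."""
    return [(t, signal(t)) for t in range(0, duration_s, period_s)]


def pressure(t):
    """Hypothetical sensor: steady 100.0 with a 3-second spike at t = 42..44."""
    return 180.0 if 42 <= t <= 44 else 100.0


def detected(samples, threshold=150.0):
    """True if any recorded sample exceeds the (assumed) alarm threshold."""
    return any(value > threshold for _, value in samples)


# One sample every 10 seconds (0.1 Hz) versus one sample per second (1 Hz).
slow = sample(pressure, period_s=10, duration_s=60)
fast = sample(pressure, period_s=1, duration_s=60)

print(f"0.1 Hz: {len(slow)} samples, spike recorded: {detected(slow)}")
print(f"1 Hz:   {len(fast)} samples, spike recorded: {detected(fast)}")
```

In this sketch the slow sampler reads at t = 40 and t = 50 and never sees the spike, while the fast sampler records it three times. The cost is ten times as many stored samples, which is exactly the trade-off that limited memory forces on designers.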

The third, and potentially the most frustrating and damaging from an analysis and process-improvement point of view, is the lack of high-resolution, high-fidelity historical data. While the low-resolution data is recorded and can be analyzed at a future point, the data that wasn’t recorded is lost forever.

This unrecorded data could play a critical role in uncovering anomalies that lead to an asset’s failure. With the right algorithm, the data may also prove to be extremely useful to improving system reliability, efficiency, and the business itself. Its loss is effectively a lost opportunity.

Is Unlimited Storage Possible?

What if designers didn’t have to compromise and could gather all the machine data at rates measured in hertz rather than decihertz? What if they no longer had to make long-term commitments to data gathering and analysis but could adjust on the fly? Sometimes what sounds too good to be true turns out to be true.

By combining the advantages of modern 64-bit CPUs like the Intel Atom® processor family with some novel thinking about databases, Exara is taking steps to remove the old constraining framework within which classic data-acquisition and analysis systems were designed. It is doing so right at the network edge through an abstraction layer between the sensors and operational technology on one side and the application or enterprise layer on the other.

Eric Kraemer, founder and chief technology officer at Exara, put it best. “The real world is very messy,” he said. “Consensus changes, equipment gets swapped out or breaks, and nodes are put up and pulled down.” That’s everyday unpredictability, but there’s an added factor: rigidity.

“As soon as we make an assumption, we create rigidity,” said Kraemer. The “machine data service” that he and his team at Exara have developed is designed to eliminate that rigidity at the edge of the network and help move the industry as a whole toward full digitization with a software-defined IoT ecosystem.

Any Data, Any Time, Any Where

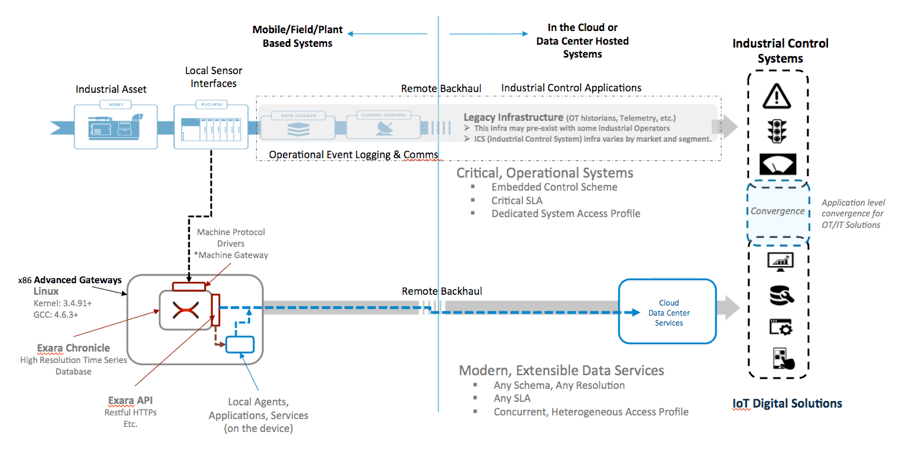

Exara’s solution taps into the edge network at the point where sensor inputs are aggregated, such as on the PLC or RTU (Figure 2). From here, the network is viewed as either southbound or northbound. Southbound is operational technology (OT) territory, comprising the assets being sensed, monitored, and controlled with a wide range of machine-level protocols (Exara supports up to 300). Northbound comprises the APIs and application layer that allow extraction and presentation of the required data in application-ready, “just in time” fashion.

Figure 2. Exara’s Chronicle taps into local sensor interfaces, stores all data in high fidelity, and allows access through APIs. (Image source: Exara)

Between the southbound and northbound domains, Exara has developed a time-series database architecture that acquires and compresses all the machine data coming from the assets, and then stores it, buffer-like, right at the edge. Using standard or custom APIs, the data can then be pulled as needed by the consumer (e.g., the enterprise IT or business office).
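The store-then-pull pattern described above can be illustrated with a small in-memory example. This is a minimal sketch under stated assumptions, not Chronicle’s design or API: the `EdgeSeries` class and its `append` and `read` methods are hypothetical names, compression is left out, and a real edge database would persist to disk.

```python
from bisect import bisect_left, bisect_right


class EdgeSeries:
    """Hypothetical append-only series for one tag (sensor input)."""

    def __init__(self):
        self._times = []   # sample timestamps in seconds, kept sorted
        self._values = []  # sample values, parallel to _times

    def append(self, t, value):
        """Southbound side: record one sample as it arrives."""
        if self._times and t < self._times[-1]:
            raise ValueError("timestamps must be non-decreasing")
        self._times.append(t)
        self._values.append(value)

    def read(self, start, end):
        """Northbound side: return samples with start <= t <= end.

        Binary search finds the slice, and the store is never modified.
        """
        lo = bisect_left(self._times, start)
        hi = bisect_right(self._times, end)
        return list(zip(self._times[lo:hi], self._values[lo:hi]))


series = EdgeSeries()
for t in range(10):               # ten one-second samples
    series.append(t, 20.0 + t)

window = series.read(3, 5)        # consumer pulls only the range it needs
print(window)
```

Because every sample is kept, the consumer can decide after the fact which time range and which resolution it needs, rather than committing to that choice when the node is deployed.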

“The bottom-line summary is, we connect directly and read native machine protocols,” said Kraemer. The amount of data captured scales with the storage installed, but for starters the system can sample a thousand tags (sensor inputs) at an average sampling rate of 1 Hz and capture “tens of billions of event logs” on a 256-GB SSD, said Kraemer. “That’s several orders of magnitude larger capacity for time-series event logging than legacy data acquisition devices.”

More important, Exara’s approach is built for concurrent read access and to present that data to modern application development ecosystems through standard APIs. “Your traditional industrial data-acquisition devices are not,” he said. Kraemer compared Exara’s Chronicle software to a typical high-end system costing $4,000, which he said could log about 2 million events.
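A quick back-of-the-envelope calculation puts these figures in perspective. The sketch below uses only the numbers quoted above (1,000 tags, 1 Hz, a 256-GB SSD, and a 2-million-event legacy logger). Two things are assumptions: that the SSD size is in decimal gigabytes, and that 10 billion events stands in as the low end of “tens of billions.”

```python
TAGS = 1_000                    # sensor inputs, from the article
SAMPLE_RATE_HZ = 1              # average sampling rate, from the article
SSD_BYTES = 256 * 10**9         # assumption: decimal gigabytes
LEGACY_EVENTS = 2_000_000       # capacity quoted for a typical high-end logger
TARGET_EVENTS = 10 * 10**9      # assumption: low end of "tens of billions"

events_per_second = TAGS * SAMPLE_RATE_HZ
events_per_day = events_per_second * 86_400

# How long a 2-million-event logger lasts at this event rate.
legacy_fill_minutes = LEGACY_EVENTS / events_per_second / 60

# How long it takes to accumulate 10 billion events at this rate.
days_to_target = TARGET_EVENTS / events_per_day

# Average storage budget per event if the SSD holds exactly that many.
bytes_per_event = SSD_BYTES / TARGET_EVENTS

print(f"Events per day:            {events_per_day:,}")
print(f"Legacy logger fills in:    {legacy_fill_minutes:.1f} minutes")
print(f"Days to 10 billion events: {days_to_target:.1f}")
print(f"Byte budget per event:     {bytes_per_event:.1f}")
```

Under these assumptions, a 2-million-event logger would fill in roughly half an hour at this rate, while 10 billion events corresponds to about 116 days of continuous recording with a budget of about 25.6 bytes per event. Holding more events in the same space requires a smaller average footprint per event, which is where compression matters.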

The critical enabling factor for Exara’s solution is the emergence of low-cost 64-bit processors like the Intel Atom processor family. These CPUs can address larger memory spaces. As memory costs go down and performance increases, higher-bandwidth interfaces such as SATA Gen 2 become critical for storage. “Remember, we’re talking about processing a lot of data,” said Kraemer.

Chronicle itself is essentially a high-performance, time-series, analytical database that is written in C so it runs very close to the kernel. To date, developers have depended on embedded transactional databases repurposed for analytical use. But analytical and transactional databases differ in their performance characteristics, access patterns, and the types of requests they get. “If we want to run apps reading from the industrial edge, the core thing we need to establish is database infrastructure,” said Kraemer.

Scalable and Secure

By abstracting storage and analytics from the rate of change at the physical layer, Exara’s approach is scalable as the network grows, as well as in terms of how much data is stored, and for how long. The typical timeframe is three years’ worth of data, and the architecture uses a “rolling window” buffer approach, so there is always three years’ worth of data stored. That is usually sufficient to allow analysis of previously untapped data to determine the cause of any anomalous behavior or to apply new analysis algorithms on that data. But if a customer requires a longer storage timeframe, simply adding more memory can scale it up.
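The “rolling window” idea can be sketched in a few lines. This is an illustrative example only, not Exara’s implementation: the `RollingWindow` class is a hypothetical name, and the retention period is passed in seconds so that the behavior is easy to see with small numbers.

```python
from collections import deque


class RollingWindow:
    """Hypothetical buffer that keeps only the most recent retention_s seconds."""

    def __init__(self, retention_s):
        self.retention_s = retention_s
        self._samples = deque()   # (timestamp, value) pairs, oldest first

    def append(self, t, value):
        """Store a new sample, then evict anything older than the window."""
        self._samples.append((t, value))
        cutoff = t - self.retention_s
        while self._samples and self._samples[0][0] < cutoff:
            self._samples.popleft()

    def oldest(self):
        """Timestamp of the oldest retained sample, or None if empty."""
        return self._samples[0][0] if self._samples else None

    def __len__(self):
        return len(self._samples)


buf = RollingWindow(retention_s=5)
for t in range(10):               # samples at t = 0..9
    buf.append(t, float(t))

print(len(buf), buf.oldest())     # only t = 4..9 remain
```

For a three-year window, `retention_s` would be about 3 * 365 * 86,400 seconds. Extending the timeframe then means raising the retention period and providing enough storage to hold the extra samples.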

From a security point of view, Chronicle is “read only” so it doesn’t give access to the installed system, and the data itself is accessed through modern, secure APIs.

Exara has already shown one customer how it can save $60,000 per year on a single horizontal pump by optimizing the pump’s use to reduce power consumption.

Conclusion

The deployment of IoT systems is getting easier as the technology advances and developers become more knowledgeable about its application. But the classic framework within which design decisions are made can still lead to network rigidity and a lack of sufficient data at the network edge to perform optimal analysis.

New thinking about the role of databases and the application layer shows that they can help abstract analysis from the physical layer and make more data available. This approach also makes the data more accessible for presentation in real time, and more useful for historical analysis to identify anomalous behavior and improve processes and outcomes.

The application of this thinking takes advantage of the falling cost of 64-bit architectures like the Intel Atom® processor family and ever-growing memory capacities. Using Exara’s Chronicle as a starting point, designers can now start working on a software-defined approach to IoT deployment.